Beyond the Bottleneck: Addressing Tool Limitations to Advance In Vivo Research in 2025

Abstract

This article addresses the critical challenges and innovative solutions associated with tools and methodologies in modern in vivo studies. Targeting researchers and drug development professionals, it explores the foundational principles of in vivo research, details cutting-edge methodological applications from gene editing to nanomedicine, provides frameworks for troubleshooting and optimizing study design, and establishes rigorous standards for validation. By synthesizing recent advances, this guide aims to empower scientists to enhance the reliability, efficiency, and translational power of their preclinical in vivo work.

In Vivo Studies Explained: Core Principles, Current Limitations, and Why Tool Choice Matters

Frequently Asked Questions

Q1: What is the core difference between in vivo, in vitro, and ex vivo models?

- In vivo (Latin for "within the living") studies are conducted inside a whole living organism, such as an animal or human [1]. They capture the full complexity of physiological interactions.

- In vitro (Latin for "within the glass") studies are performed with biological components (like cells) in an artificial, controlled environment outside a living organism, such as a petri dish [2] [1].

- Ex vivo studies involve testing on living tissue that has been removed from an organism and maintained in an external environment that mimics natural conditions [3].

Q2: When should I prioritize using an in vivo model in my research?

In vivo models are often essential when your research question involves understanding complex, systemic interactions within an intact organism [3] [1]. Key scenarios include:

- Studying complex physiological processes, disease progression, or immune responses [1].

- Evaluating the overall efficacy, safety, and toxicology of a new drug candidate, including its absorption, distribution, metabolism, and excretion (ADME) [3] [1].

- Investigating behaviors or cognitive processes [1].

- Conducting mandatory preclinical trials required for regulatory approval before human clinical trials [1].

Q3: What are the main limitations of traditional in vitro models, and how can they be addressed?

Traditional 2D in vitro models, while offering high control and throughput, have significant limitations [2]:

- Simplified Environment: Cells grown in a flat, 2D layer on plastic do not experience the three-dimensional architecture, biomechanical forces, or cell-cell interactions of a living tissue, which can lead to artificial cell behavior [2].

- Poor Predictive Power: The artificial environment can diminish the model's ability to accurately predict human responses, potentially contributing to the high failure rate of drugs in human trials [4].

- Addressing the Gaps: Advanced systems like three-dimensional (3D) cell cultures and Organ-on-a-Chip technology are bridging this gap. These systems expose cells to more physiological conditions, including fluid flow, mechanical forces, and 3D structures, encouraging more natural cell behavior and improving translational value [2].

Q4: My ex vivo tissue is degrading during the experiment. How can I maintain its viability and integrity?

Maintaining tissue viability is the most critical challenge in ex vivo experiments [3]. Key strategies include:

- Optimizing the Culture System: Ensure the tissue is kept in a nutrient-rich medium that mimics its natural extracellular fluid and is maintained at the correct pH, temperature, and oxygen levels [3].

- Limiting Experiment Duration: Ex vivo tissues have a finite lifespan. Design your experiments to be as short as possible to minimize the effects of degradation [3].

- Viability Monitoring: Continuously monitor tissue health throughout the study using markers of cell death or functional assays specific to the tissue type [3].

Q5: What is an In Vitro-In Vivo Correlation (IVIVC) and why is it important in drug development?

An IVIVC is a predictive mathematical model that describes the relationship between a property of a dosage form measured in vitro (typically the drug dissolution rate) and a relevant in vivo response (such as the concentration of drug in the blood or the amount absorbed) [5] [6]. Its importance is twofold [5] [6]:

- Development & Regulation: It serves as a tool for optimizing formulations and can, in certain cases, reduce the need for additional human bioequivalence studies, especially when seeking approval for changes to a formulation.

- Predictive Power: A strong IVIVC increases confidence that the performance of a drug in laboratory tests will reliably predict its behavior in the human body.
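As a rough illustration of the idea, the simplest form of IVIVC (a point-to-point, "Level A" style relationship) can be sketched as a straight-line fit between the fraction of drug dissolved in vitro and the fraction absorbed in vivo at matched time points. The data values and the function names `fit_line` and `predict_absorbed` below are hypothetical and chosen only for demonstration; a real IVIVC requires deconvolution of plasma data and formal validation.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept, plus R^2."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


# Hypothetical matched time points (illustrative values, not real data).
fraction_dissolved = [0.10, 0.25, 0.45, 0.65, 0.85, 0.95]  # in vitro
fraction_absorbed = [0.08, 0.22, 0.41, 0.62, 0.80, 0.92]   # in vivo

slope, intercept, r_squared = fit_line(fraction_dissolved, fraction_absorbed)


def predict_absorbed(dissolved):
    """Predict the in vivo fraction absorbed from an in vitro fraction dissolved."""
    return slope * dissolved + intercept


print(f"slope={slope:.3f}, intercept={intercept:.3f}, R^2={r_squared:.4f}")
print(f"predicted fraction absorbed at 50% dissolved: {predict_absorbed(0.5):.3f}")
```

A slope near 1 with a high R² suggests that dissolution tracks absorption closely for this hypothetical formulation; how close is close enough is a validation question that this sketch does not address.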

Troubleshooting Guides

Challenge 1: Selecting the Right Model for Your Research Question

Choosing an inappropriate model can waste resources and yield misleading data. Use the following table to make an informed decision.

Table: Model Selection Based on Research Objectives

| Research Objective | Recommended Model | Rationale |
|---|---|---|
| High-throughput drug screening | In Vitro | Allows for rapid, controlled testing of thousands of compounds on cell lines [2] [1]. |
| Studying a specific molecular pathway | In Vitro | Enables isolation and precise manipulation of variables in a simplified system [1]. |
| Assessing intestinal drug permeability | Ex Vivo (e.g., tissue in an Ussing chamber) | Retains the complex intestinal epithelium and mucus layer, providing a more physiologically relevant barrier than single cell lines [3]. |
| Evaluating systemic drug efficacy & toxicity | In Vivo | Captures complex ADME processes and organ-system interactions in a whole organism [3] [1]. |
| Regulatory preclinical safety studies | In Vivo (though transitioning) | Currently required by regulators, but new approach methodologies (NAMs) are being phased in [7]. |

Challenge 2: Addressing the Translational Gap Between Models

Data from in vitro or animal models often fail to predict human outcomes. This "translational gap" can be mitigated with the strategies below.

Strategy 1: Incorporate Human-Relevant Systems

- Action: Move beyond simple 2D cell cultures. Use primary human cells, co-cultures, 3D organoids, or Organ-on-a-Chip technology that better mimic human tissue structure and function [2].

- Rationale: These advanced in vitro systems expose cells to more natural cues, leading to more physiologically relevant gene expression and functionality.

Strategy 2: Establish a Robust In Vitro-In Vivo Correlation (IVIVC)

- Action: Develop a mathematical model linking your in vitro data (e.g., dissolution rate) to in vivo pharmacokinetic parameters (e.g., plasma drug concentration) [6].

- Rationale: A well-validated IVIVC can allow for future formulation changes and performance predictions with fewer in vivo studies, saving time and resources [5] [6].

Strategy 3: Leverage In Silico and New Approach Methodologies (NAMs)

- Action: Integrate computational modeling and simulation (e.g., physiologically based pharmacokinetic (PBPK) models) and data from human-relevant NAMs into your development pipeline [7] [8].

- Rationale: Regulatory agencies like the FDA are actively encouraging the use of human-based computer models and lab tests to supplement or replace animal data, which can improve predictive accuracy for human outcomes [7].

Challenge 3: Overcoming Technical Limitations in Ex Vivo Experiments

Problem: Rapid Loss of Tissue Viability

- Troubleshooting Steps:

- Validate Handling Procedures: Minimize the time between tissue extraction and the start of the experiment. Ensure dissection tools are sharp to avoid crushing the tissue.

- Optimize Culture Conditions: Use a perfusion system if possible to continuously deliver oxygen and nutrients and remove waste products, rather than static culture. Confirm that the osmolality, pH, and temperature of the medium are optimal for the specific tissue type.

- Monitor Integrity: Establish and use viability markers at the beginning and throughout the experiment. For intestinal transport studies, this could include measuring transepithelial electrical resistance (TEER) to confirm barrier integrity [3].

Problem: High Variability in Ex Vivo Data

- Troubleshooting Steps:

- Standardize Sourcing: Source tissues from consistent suppliers and animal strains with defined characteristics (age, sex, genetic background).

- Control Pre-experiment Variables: Standardize animal housing conditions, fasting periods, and dissection protocols across all experiments.

- Increase Sample Size: Account for inherent biological variability by using an adequate number of tissue replicates in each experimental group.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Materials for Intestinal Permeability and Drug Transport Studies

| Research Reagent / Material | Function in Experiment | Key Considerations |
|---|---|---|
| Caco-2 Cell Line | A human colon carcinoma cell line that spontaneously differentiates into enterocyte-like cells. Forms a polarized monolayer with tight junctions, used as a standard in vitro model for predicting human intestinal drug permeability [3]. | Requires long culture time (~21 days) to fully differentiate. Primarily models absorptive enterocytes, not other cell types. |
| Madin-Darby Canine Kidney (MDCK) Cell Line | A canine kidney cell line that forms tight junctions rapidly. Often used as a faster, high-throughput alternative to Caco-2 for permeability screening [3]. | Species difference (canine vs. human). Can be transfected with human transporters for more specific studies. |
| Ussing Chamber | An ex vivo apparatus for measuring the short-circuit current and electrical resistance across a segment of intact tissue (e.g., intestinal mucosa) [3]. | Directly measures ion and drug transport across native tissue. Critical for validating findings from cell-based models but requires fresh, viable tissue. |
| Transport Buffers (e.g., Hanks' Balanced Salt Solution, HBSS) | A balanced salt solution that maintains pH and osmotic balance, providing a physiologically relevant environment for cells or tissues during transport assays [3]. | Often supplemented with glucose for energy and may require a gassing cycle (e.g., with O₂/CO₂) for ex vivo tissues. |
| Biorelevant Media (e.g., FaSSIF/FeSSIF) | Simulated intestinal fluids that mimic the fasting (FaSSIF) and fed (FeSSIF) state in the human gut. Contains bile salts and phospholipids [6]. | Crucial for obtaining meaningful dissolution and permeability data for poorly soluble drugs, as solubility is often the rate-limiting step for absorption. |

Experimental Protocol: Establishing an In Vitro Intestinal Permeability Model

This protocol outlines the key steps for using the Caco-2 cell model to assess a drug candidate's permeability, a common experiment in early drug development [3].

Detailed Methodology:

- Cell Seeding: Seed Caco-2 cells at a high density (e.g., 100,000 cells/cm²) onto the semi-permeable membrane of a transwell insert. The membrane sits in a well plate, creating an apical (top) and basolateral (bottom) compartment.

- Cell Differentiation: Culture the cells for approximately 21 days, changing the culture medium every 2-3 days. During this period, the cells proliferate and differentiate to form a tight, polarized monolayer that mimics the intestinal epithelium.

- Integrity Check: Before the experiment, measure the Transepithelial Electrical Resistance (TEER) of the monolayers using a volt-ohm meter. Accept only inserts with a high TEER value (e.g., >300 Ω·cm²), as this indicates well-formed tight junctions and an intact barrier.

- Drug Application: Add the drug compound dissolved in an appropriate transport buffer (e.g., HBSS) to the donor compartment. For absorption studies, this is typically the apical side (A-to-B). For efflux studies, it is the basolateral side (B-to-A).

- Sample Collection: At predetermined time points (e.g., 30, 60, 90, and 120 minutes), take samples from the receiver compartment. Replace the volume with fresh pre-warmed buffer to maintain sink conditions.

- Analytical Quantification: Analyze the concentration of the drug in the samples using a sensitive analytical method such as High-Performance Liquid Chromatography (HPLC) or Liquid Chromatography with Tandem Mass Spectrometry (LC-MS/MS).

- Data Analysis: Calculate the Apparent Permeability Coefficient (Papp) using the formula:

Papp (cm/s) = (dQ/dt) / (A × C₀)

where dQ/dt is the transport rate (µg/s), A is the surface area of the membrane (cm²), and C₀ is the initial concentration in the donor compartment (µg/mL). Compare the Papp values to known standards to classify the drug's permeability (e.g., high vs. low).
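The integrity check and the Papp calculation from the protocol above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the insert area, resistance readings, and sample amounts are hypothetical; TEER is taken as the blank-corrected resistance multiplied by membrane area (a common convention, not stated in the protocol itself); dQ/dt is estimated as the least-squares slope of cumulative transported amount versus time; and the cumulative amounts are assumed to be already corrected for the volume removed at each sampling step.

```python
def teer_ohm_cm2(resistance_insert_ohm, resistance_blank_ohm, area_cm2):
    """Unit-area TEER: blank-corrected resistance multiplied by membrane area."""
    return (resistance_insert_ohm - resistance_blank_ohm) * area_cm2


def least_squares_slope(x, y):
    """Slope of the ordinary least-squares line through the points (x, y)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    return sxy / sxx


def apparent_permeability(times_s, cumulative_ug, area_cm2, c0_ug_per_ml):
    """Papp (cm/s) = (dQ/dt) / (A * C0); 1 mL = 1 cm^3, so units reduce to cm/s."""
    dq_dt = least_squares_slope(times_s, cumulative_ug)  # µg/s
    return dq_dt / (area_cm2 * c0_ug_per_ml)


# Hypothetical values for a single insert (illustrative only).
AREA_CM2 = 1.12                           # assumed membrane area
teer = teer_ohm_cm2(450.0, 120.0, AREA_CM2)
monolayer_ok = teer > 300.0               # example acceptance threshold from the protocol

times_s = [1800, 3600, 5400, 7200]        # 30, 60, 90, 120 min
cumulative_ug = [0.5, 1.0, 1.5, 2.0]      # cumulative amount in receiver
papp = apparent_permeability(times_s, cumulative_ug, AREA_CM2, c0_ug_per_ml=100.0)

print(f"TEER = {teer:.1f} ohm*cm^2, accepted = {monolayer_ok}")
print(f"Papp = {papp:.2e} cm/s")
```

Fitting a slope across all time points, rather than using a single interval, reduces the influence of any one noisy sample, provided transport is still in its linear phase.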

Frequently Asked Questions (FAQs)

Q1: What are the most common sources of variability and bias in in vivo experiments, and how can I mitigate them?

Common sources include improper animal model selection, non-randomized group assignment, unblinded procedures, and insufficient sample sizes. To mitigate these:

- Refine Animal Model Selection: Choose species and genetic backgrounds that best match your specific research question and the human disease context. Using an inappropriate model is a primary cause of failed experiments and difficulties in extracting useful insights [9].

- Implement Randomization and Blinding: Even with congenic strains, biological variation exists. Randomization reduces selection bias, while blinding ensures researchers do not handle animals differently based on treatment groups, preventing biased results [9].

- Ensure Proper Sample Size: Conduct power analyses to determine the correct sample size. This is crucial for obtaining statistically valid conclusions, adhering to ethical standards, and effectively planning for blinding and randomization [9].

Q2: My translational data is complex and multi-faceted. How can I better structure it for analysis and sharing?

Adopting data science best practices is key to unlocking the potential of complex in vivo data [10].

- Use the Smallest Experimental Unit: Structure your dataset so that each row represents the smallest experimental unit (e.g., a single animal). This allows for greater flexibility in future analysis compared to only entering group means [10].

- Include Rich Metadata: Capture comprehensive details about demographics, physiology, procedures, and environment. Use consistent naming conventions and include as many data points as possible during initial compilation to avoid revisiting source files later [10].

- Prioritize Raw Data: Aggregate non-normalized raw data (e.g., actual weights in grams) before including any normalized values (e.g., percentage change). This preserves data integrity and allows for multiple analytical approaches downstream [10].
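The three practices above can be illustrated with a small sketch: one row per animal per measurement, metadata stored alongside the raw value, and normalized values derived afterwards rather than entered by hand. All field names (`animal_id`, `group`, `body_weight_g`, and so on) and values are hypothetical and used only for illustration.

```python
from collections import defaultdict
from statistics import mean

# One row per animal per measurement: the smallest experimental unit,
# with metadata and the raw (non-normalized) value in grams.
records = [
    {"animal_id": "M01", "group": "vehicle", "sex": "F", "day": 0, "body_weight_g": 20.0},
    {"animal_id": "M01", "group": "vehicle", "sex": "F", "day": 7, "body_weight_g": 21.0},
    {"animal_id": "M02", "group": "vehicle", "sex": "M", "day": 0, "body_weight_g": 22.0},
    {"animal_id": "M02", "group": "vehicle", "sex": "M", "day": 7, "body_weight_g": 23.1},
    {"animal_id": "M03", "group": "treated", "sex": "F", "day": 0, "body_weight_g": 21.0},
    {"animal_id": "M03", "group": "treated", "sex": "F", "day": 7, "body_weight_g": 19.95},
    {"animal_id": "M04", "group": "treated", "sex": "M", "day": 0, "body_weight_g": 20.0},
    {"animal_id": "M04", "group": "treated", "sex": "M", "day": 7, "body_weight_g": 19.0},
]


def add_percent_change(rows, value_key="body_weight_g"):
    """Derive percent change from each animal's own day-0 value; raw data is kept."""
    baseline = {r["animal_id"]: r[value_key] for r in rows if r["day"] == 0}
    derived = []
    for r in rows:
        row = dict(r)
        start = baseline[r["animal_id"]]
        row["pct_change"] = 100.0 * (r[value_key] - start) / start
        derived.append(row)
    return derived


def group_means(rows, value_key):
    """Summaries are computed from per-animal rows, never stored in their place."""
    grouped = defaultdict(list)
    for r in rows:
        grouped[(r["group"], r["day"])].append(r[value_key])
    return {key: mean(values) for key, values in grouped.items()}


with_change = add_percent_change(records)
summary = group_means(with_change, "pct_change")
for (group, day), value in sorted(summary.items()):
    print(f"{group:8s} day {day}: {value:+.1f}% mean body-weight change")
```

Because the per-animal rows are retained, the same table can later be re-summarized by sex or any other metadata field without going back to the source files.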

Q3: Are there emerging technologies that can help overcome the limitations of traditional in vivo models?

Yes, several innovative technologies are bridging the translational gap:

- Artificial Intelligence (AI) and Machine Learning: AI can transform in vivo study design by distilling essential information from vast literature, helping researchers choose the most relevant and up-to-date animal models and assays. It is also being used to predict pharmacokinetics, identify biomarkers, and optimize clinical trial design [11] [9].

- Organ-on-Chip Technology: These are advanced in vitro systems that use human cells and microfluidic perfusion to simulate organ-level biology. They provide a more human-relevant, ethical, and mechanistically insightful alternative for research in drug discovery, toxicology, and infectious disease modeling [12].

- Image-Guided Injection Systems: Technologies like the VivoJect system use real-time ultrasound imaging to enable precise, minimally invasive delivery of cells or therapies in animal models. This improves accuracy, reduces procedural complications and variability, and aligns with the ethical principles of the 3Rs (Reduction, Refinement, Replacement) [13].

Q4: What are the key regulatory and practical challenges in moving a device from research to clinical use?

Two major, interrelated challenges exist [14]:

- Scientific Understanding: A device must provide measurements that are thoroughly understood in the context of the underlying clinical problem. It is critical to know what the device is actually measuring—including the timeframe and tissue volume of the measurement—relative to the dynamic pathophysiological processes of interest [14].

- Regulatory and Design Hurdles: The path to regulatory approval (e.g., through the FDA) is long and intricate. The device must solve an important unmet clinical need and be designed with technical and user-based considerations in mind to minimize risk and optimize usability and acceptability [14]. Addressing these challenges from the beginning makes the process more efficient.

Troubleshooting Guides

Problem: High Variability in Experimental Outcomes

Possible Causes and Solutions:

| Cause | Solution | Key Considerations |
|---|---|---|
| Inappropriate Animal Model | Conduct a thorough literature review using AI tools to select a species and genetic background with high translational relevance for your specific disease [9]. | The ideal model is a suitable match for the tests and assays performed. Staying updated avoids using outdated, suboptimal models [9]. |
| Inadequate Experimental Design | Implement strict randomization and blinding procedures for all in vivo experiments [9]. | Randomization reduces selection bias; blinding prevents operator-induced bias during procedures and outcome assessment [9]. |
| Poorly Defined Data Structure | Structure data at the per-animal level, include rich metadata, and aggregate raw, non-normalized values to enable robust statistical analysis and data sharing [10]. | Entering data with the highest granularity possible allows for greater manipulation and more powerful data science applications [10]. |

Problem: Difficulty in Analyzing and Validating Complex Data from New Approaches

Possible Causes and Solutions:

| Cause | Solution | Key Considerations |
|---|---|---|
| Lack of Accessible Tools for Virtual Cohorts | Utilize open-source statistical platforms, like the R-Shiny web application developed by the SIMCor project, to validate virtual cohorts and analyze in-silico trial data [15]. | This specific tool provides a menu-driven, practical platform for comparing virtual cohorts with real datasets, supporting the wider adoption of in-silico methods [15]. |
| Challenges in Interpreting AI/ML Predictions | Employ explainable AI (XAI) techniques, such as SHAP analysis, to interpret supervised machine learning model predictions. This builds clinical trust and facilitates adoption [11]. | Demonstrating the impact of specific features on a model's output helps clinicians understand and trust the predictions, moving from a "black box" to an interpretable tool [11]. |

Research Reagent Solutions: Essential Tools for Modern Translational Research

The following table details key computational and methodological "reagents" essential for designing and analyzing robust translational studies.

| Tool / Solution | Function | Application in Translational Research |
|---|---|---|
| AI for Model Selection | Distills information from scientific literature to recommend optimal and up-to-date animal models and assays [9]. | Ensures experimental models are relevant, improves translational potential, and avoids using outdated models criticized by peers [9]. |
| Open-Source Statistical Web App | Provides a user-friendly R-Shiny environment for validating virtual cohorts and analyzing in-silico trials [15]. | Enables researchers to statistically compare virtual and real patient data, facilitating the use of in-silico trials to reduce, refine, and replace traditional clinical/animal studies [15]. |
| eXtreme Gradient Boosting (XGBoost) | A powerful machine learning algorithm for supervised learning tasks like classification and regression [11]. | Used for biomarker-based patient stratification, predicting treatment responses, and optimizing trial design through high-accuracy prediction models [11]. |
| Image-Guided Injection System | Combines real-time ultrasound imaging with automated injection for precise delivery in animal models [13]. | Increases precision of injections, reduces invasiveness and variability, improves animal welfare (Refinement), and minimizes the number of animals needed (Reduction) [13]. |
| Organ-on-Chip Platform | Advanced in vitro model using human cells and microfluidics to simulate organ-level structure and function [12]. | Serves as a human-relevant, ethical alternative for drug screening, toxicity testing, and disease modeling, helping to bridge the species gap [12]. |
| SHAP (SHapley Additive exPlanations) | A method to explain the output of any machine learning model, showing how each feature contributes to the prediction [11]. | Critical for building clinical trust in AI models by making their decisions interpretable, such as explaining the risk factors for an adverse event [11]. |

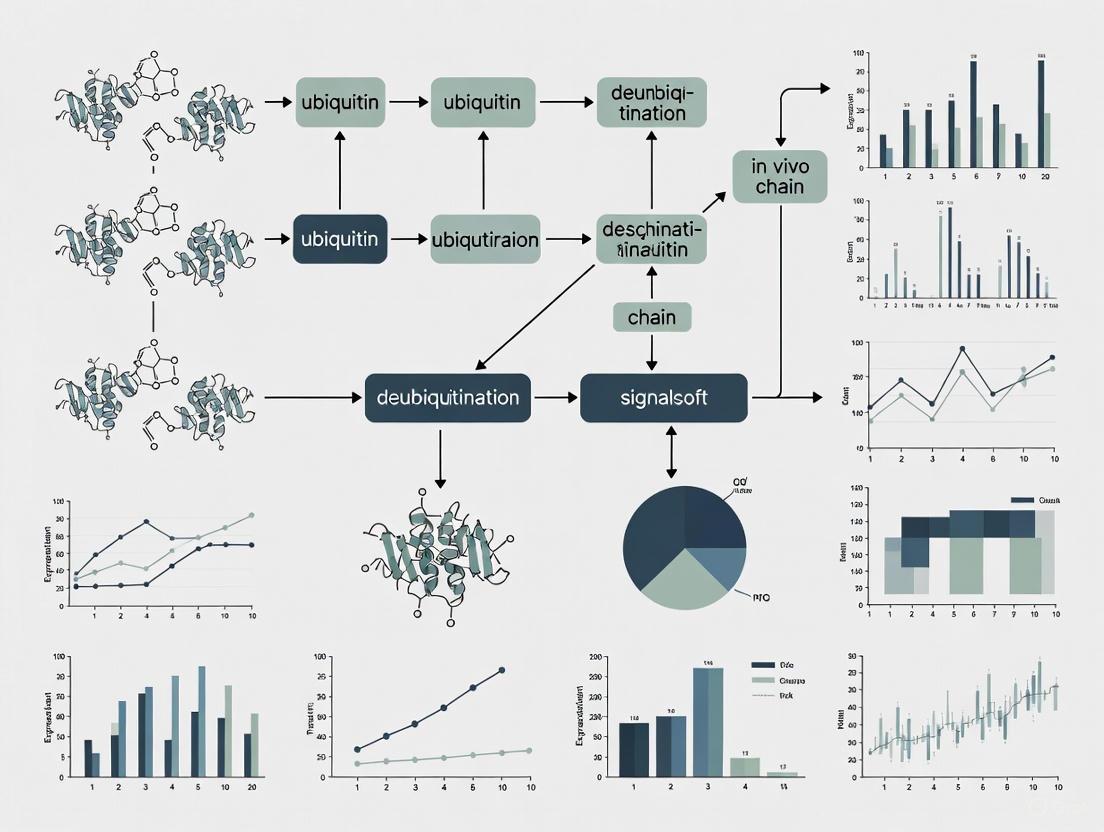

Experimental Workflows and Pathways

Workflow: Traditional vs. AI-Enhanced In Vivo Study Design

Workflow: Pathway for Translating an In Vivo Device to Clinical Use

Technical Support Center: Troubleshooting In Vivo Research

This technical support center provides targeted troubleshooting guides and FAQs to help researchers overcome common experimental challenges in in vivo studies, directly supporting a broader thesis on addressing methodological limitations in this field.

Troubleshooting Guides

Table 1: Troubleshooting In Vivo Efficacy Studies

| Problem Area | Specific Issue | Potential Cause | Recommended Solution |
|---|---|---|---|
| Tumor Models | Low tumor take rate or highly variable tumor growth [16] | Cell line-specific characteristics or improper inoculation techniques [16] | Conduct a pilot tumor growth study to characterize the take rate and growth profile before therapeutic evaluation [16]. |
| Dosing | Inability to determine an effective or safe dosing regimen [16] | Missing preliminary pharmacokinetic and toxicity data [16] | Perform dose-range finding studies to determine the Maximum Tolerated Dose (MTD) and optimal schedule prior to efficacy experiments [16]. |
| Experimental Controls | Ambiguous experimental results; unable to isolate nanoparticle effect [16] | Lack of appropriate control groups [16] | Include relevant controls: standard-of-care, free (unformulated) drug, vehicle (formulation), and unloaded nanoparticle control [16]. |
| Molecular Weight Analysis (GPC/SEC) | Inaccurate molecular weight (Mn, Mw) results [17] | Poor sample preparation or incorrect calibration standards [17] | Use high-purity solvents, filter samples through a 0.2–0.45 µm filter, and choose calibration standards structurally close to your polymer. For complex polymers, use universal calibration or MALS detectors [17]. |
| Chromatography (GC) | Loss of chromatographic efficiency (broader peaks) [18] | Column installation issues, contamination, or incorrect carrier gas linear velocity [18] | Ensure proper column installation and positioning; trim the inlet end if contaminated and re-install. Verify and set the correct carrier gas linear velocity for your column dimensions [18]. |

Frequently Asked Questions (FAQs)

Q1: How do I determine the correct number of animals to use in my in vivo efficacy study to ensure statistically significant results? [16]

A: The sample size depends on the variability in tumor growth/survival and the anticipated magnitude of the treatment response. A pilot study is necessary to estimate this variability. For tumor volume data (a continuous variable), a simplified sample size estimate is given by:

n = 1 + 2 * C * (s/d)^2

Where s is the standard deviation, d is the anticipated difference between control and treatment response, and the constant C is 7.85 (assuming a type I error of 5% and a type II error of 20%, i.e., 80% power). For implanted tumor models with a potent treatment, the sample size is generally not greater than 10 animals per group [16].
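The simplified formula above is easy to wrap in a small helper. The sketch below rounds up to the next whole animal; the function name `animals_per_group` and the example standard deviation and effect size are hypothetical, and the result is only a starting estimate that should be confirmed with a formal power analysis.

```python
import math


def animals_per_group(sd, difference, c=7.85):
    """Simplified estimate n = 1 + 2 * C * (s / d)^2, rounded up to a whole animal.

    sd: standard deviation from a pilot study (same units as difference)
    difference: anticipated difference between control and treatment
    c: constant from the formula above; 7.85 is the value given in the text
    """
    if sd <= 0 or difference <= 0:
        raise ValueError("sd and difference must be positive")
    return math.ceil(1 + 2 * c * (sd / difference) ** 2)


# Hypothetical pilot result: tumor volume SD of 150 mm^3 and an
# anticipated treatment effect of 250 mm^3.
n_potent = animals_per_group(sd=150.0, difference=250.0)

# A smaller anticipated effect (equal to the SD) needs many more animals.
n_modest = animals_per_group(sd=150.0, difference=150.0)

print(f"potent treatment: {n_potent} animals per group")
print(f"modest treatment: {n_modest} animals per group")
```

The comparison shows how quickly the required group size grows as the expected effect shrinks relative to the variability, which is why the pilot estimate of s matters so much.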

Q2: What are the appropriate statistical methods for analyzing tumor volume and survival data from my study? [16]

A: The appropriate method depends on the type of data:

- Tumor Volume and Body Weight: Data can be plotted as mean ± standard deviation. Statistical differences between groups over time, with equal sample sizes, can be determined using ANOVA with post-hoc comparisons (e.g., Dunnett's Test for comparison to control, or Duncan's Test for comparisons between all groups). For unequal sample sizes (common in survival studies), use ANOVA with Tukey's HSD Test [16].

- Survival/Time-to-Endpoint Data: Group survival data are best analyzed by Kaplan-Meier analysis, with the log-rank (Mantel-Cox) test to determine statistical significance. This method allows for censoring of data, such as removing non-neoplasia-related endpoints from the analysis [16].
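To make the Kaplan-Meier idea concrete, here is a minimal pure-Python sketch of the product-limit estimator with right-censoring. It is illustrative only: the survival times are hypothetical, the function name `kaplan_meier` is an assumption, and it does not perform the log-rank test, for which an established statistics package is the better choice.

```python
def kaplan_meier(times, events):
    """Product-limit survival estimate.

    times: time to endpoint or to censoring for each animal
    events: True if the endpoint was observed, False if the animal was censored
    Returns a list of (time, survival_probability) at each time with an event.
    """
    data = sorted(zip(times, events))
    at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        observed = 0   # endpoints reached at time t
        leaving = 0    # all animals leaving the risk set at time t
        while i < len(data) and data[i][0] == t:
            observed += 1 if data[i][1] else 0
            leaving += 1
            i += 1
        if observed:
            survival *= 1.0 - observed / at_risk
            curve.append((t, survival))
        at_risk -= leaving
    return curve


# Hypothetical group of six animals (days on study). False marks a censored
# animal, e.g. one removed for a non-neoplasia-related reason.
days = [10, 14, 14, 21, 28, 30]
reached_endpoint = [True, True, False, True, False, True]

curve = kaplan_meier(days, reached_endpoint)
for day, probability in curve:
    print(f"day {day}: S(t) = {probability:.3f}")
```

Censored animals still count in the at-risk denominator up to the day they leave the study, which is how the method uses their partial information instead of discarding it.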

Q3: My polymer nanoparticle's molecular weight distribution seems inaccurate in GPC. What are the most common sources of error? [17]

A: The top mistakes in Gel Permeation Chromatography (GPC) are:

- Choosing the Wrong Column and Detector: Using a column calibrated for one polymer type (e.g., polystyrene) to analyze another (e.g., PEG) leads to inaccurate results.

- Poor Sample Preparation: Incomplete dissolution of the sample or the presence of dust/particulates can block the column and create strange peaks.

- Using the Wrong Calibration Standards: Using a standard that does not structurally match your polymer will give wrong molecular weight results, especially for branched polymers.

- Ignoring System Maintenance: Dirty columns or detector drift lead to unstable and unreliable data.

Q4: What are the essential controls for a study testing a targeted nanoformulated drug? [16]

A: To properly interpret the results of a targeted nanoformulation, your study should include:

- The nanoformulated API at multiple concentrations.

- A clinical formulation of the API (standard-of-care) at an equitoxic and equivalent dose.

- A vehicle control (the formulation without the active drug).

- An unloaded nanoparticle control (without the API).

- Crucially, an untargeted version of the nanoformulation (without the ligand) to specifically identify and validate any targeting advantages [16].

Detailed Experimental Protocols

Protocol 1: Designing an In Vivo Efficacy Study for a Nanoformulated Anticancer Agent

This protocol outlines key steps for establishing a robust in vivo efficacy model, based on guidance from the National Cancer Institute's Nanotechnology Characterization Laboratory (NCL) [16].

1. Pre-Study Justification and Approval:

- All animal protocols must be approved by an Institutional Animal Care and Use Committee (IACUC). Scientifically justify that the work is not an unnecessary duplication of previous research [16].

- Test all cancer cell lines for human and rodent pathogens prior to use [16].

2. Model and Route Selection:

- Select a tumor model relevant to your drug's mechanism (e.g., subcutaneous xenograft, orthotopic, metastatic) [16].

- The route of drug administration (e.g., intravenous tail vein injection) should reflect the anticipated clinical route [16].

3. Preliminary Studies:

- Pilot Tumor Growth Study: Conduct a small pilot study to characterize the tumor take rate and growth profile for your specific cell line and mouse strain [16].

- Dose-Range Finding: Perform studies to determine the Maximum Tolerated Dose (MTD), defined as the dose producing >20% body weight loss in 10% of animals. This is critical for setting equivalent and equitoxic doses for your nanoformulation and control groups [16].

4. Animal Randomization and Dosing:

- Once tumors are palpable, randomize animals based on tumor volume and body weight to ensure no initial bias between treatment groups [16].

- Dosing should be based on the initial dose-range finding study.

5. Data Collection and Analysis:

- Monitor and record tumor volumes and body weights regularly.

- For survival studies, define clear endpoints (e.g., tumor diameter ≥2 cm, loss of ≥20% initial body weight) [16].

- Analyze data using appropriate statistical methods as detailed in the FAQs above [16].
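The randomization in step 4 of the protocol above can be sketched as a simple blocked scheme: rank animals by tumor volume, then shuffle group labels within each consecutive block so that every group receives animals from across the size range. This is one possible approach, not the method prescribed by the cited guidance. The cohort values, group names, and the function `block_randomize` are hypothetical; body weight is used here only as a tie-breaker in the ranking.

```python
import random


def block_randomize(animals, groups, seed=2025):
    """Assign one animal per group within each block of similar tumor volume."""
    rng = random.Random(seed)  # fixed seed so the allocation can be reproduced
    ranked = sorted(animals, key=lambda a: (a["tumor_mm3"], a["weight_g"]))
    assignment = {}
    for start in range(0, len(ranked), len(groups)):
        block = ranked[start:start + len(groups)]
        labels = list(groups)
        rng.shuffle(labels)  # random order of groups within this block
        for animal, label in zip(block, labels):
            assignment[animal["id"]] = label
    return assignment


# Hypothetical cohort: (tumor volume in mm^3, body weight in g).
measurements = [
    (95, 21.0), (130, 22.4), (88, 20.1), (150, 23.0),
    (110, 21.7), (102, 20.8), (140, 22.9), (97, 21.2),
    (125, 22.0), (115, 21.5), (160, 23.4), (105, 20.9),
]
animals = [
    {"id": f"M{i:02d}", "tumor_mm3": volume, "weight_g": weight}
    for i, (volume, weight) in enumerate(measurements, start=1)
]
groups = ["vehicle", "free_drug", "nanoformulation"]

assignment = block_randomize(animals, groups)
for label in groups:
    members = [a for a in animals if assignment[a["id"]] == label]
    mean_volume = sum(a["tumor_mm3"] for a in members) / len(members)
    print(f"{label:16s} n={len(members)} mean tumor volume={mean_volume:.1f} mm^3")
```

Recording the seed alongside the allocation makes the assignment auditable, while the shuffle within each block keeps the operator from choosing which animal goes where.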

Protocol 2: Accurate Molecular Weight Determination of Polymers via Gel Permeation Chromatography (GPC)

This protocol consolidates best practices to ensure reliable polymer molecular weight data, critical for characterizing nanoformulated carriers [17].

1. System Setup and Calibration:

- Column Selection: Choose a column with a pore size and chemistry suitable for your polymer's molecular weight range and structure [17].

- Detector Selection: For absolute molecular weight without relying on polymer standards, use Multi-Angle Light Scattering (MALS) or viscometry detectors [17].

- Calibration: Use calibration standards that are structurally close to your polymer. For branched or complex polymers, employ universal calibration or MALS for accurate results [17].

2. Sample Preparation:

- Solvent: Use clean, high-purity solvents suitable for your polymer [17].

- Dissolution: Gently heat or sonicate the solution to ensure the polymer is fully dissolved [17].

- Filtration: Filter the dissolved sample through a 0.2–0.45 µm PTFE filter to remove any dust or undissolved material that could block the column [17].

3. System Maintenance and Operation:

- Regularly clean and maintain the GPC system, including changing mobile phases to avoid contamination [17].

- Monitor system pressure and detector baseline before each run [17].

4. Data Interpretation:

- For multi-detector systems (RI, MALS, Viscometer), use specialized software and consult with trained professionals for correct data interpretation [17].
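For orientation, the averages that GPC software reports can be reproduced from slice data with the standard definitions Mn = Σh / Σ(h/M) and Mw = Σ(h·M) / Σh, where h is a concentration-proportional detector signal (for example, RI) and M is the molecular weight assigned to each slice by calibration or MALS. The sketch below assumes baseline-corrected signals and uses hypothetical slice values; the function name is illustrative.

```python
def molecular_weight_averages(slice_mw, slice_signal):
    """Number-average (Mn), weight-average (Mw), and dispersity (Mw/Mn).

    slice_mw: molecular weight assigned to each chromatogram slice (g/mol)
    slice_signal: baseline-corrected, concentration-proportional signal per slice
    """
    total_signal = sum(slice_signal)
    mn = total_signal / sum(h / m for h, m in zip(slice_signal, slice_mw))
    mw = sum(h * m for h, m in zip(slice_signal, slice_mw)) / total_signal
    return mn, mw, mw / mn


# Hypothetical two-slice example: equal masses of 10,000 and 40,000 g/mol chains.
mn, mw, dispersity = molecular_weight_averages(
    slice_mw=[10_000.0, 40_000.0],
    slice_signal=[1.0, 1.0],
)
print(f"Mn = {mn:.0f} g/mol, Mw = {mw:.0f} g/mol, dispersity = {dispersity:.4f}")
```

Because Mn weights every chain equally while Mw weights by mass, errors in the low-molecular-weight tail (for example, from a poor baseline) tend to distort Mn more than Mw.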

Experimental Workflow Visualization

In Vivo Efficacy Study Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Application | Key Considerations |
|---|---|---|
| Syngeneic Tumor Models (e.g., B16, 4T1) [16] | Immunocompetent models for studying tumor-immune system interactions and immunotherapy efficacy. | Tumor growth variability should be characterized in a pilot study prior to therapeutic evaluation [16]. |
| Xenograft Models (e.g., LS174T, MDA-MB-231) [16] | Human tumor cells grown in immunodeficient mice; standard for testing human-specific therapies. | Cell lines must be tested for pathogens prior to use. An untargeted nanoformulation control is needed for targeted therapies [16]. |
| Orthotopic Metastatic Models (e.g., MDA-MB-231-Luc) [16] | Tumor cells implanted in their native organ site (e.g., breast cancer in mammary fat pad); models natural metastasis. | Utilize non-invasive imaging (e.g., bioluminescence) to track tumor growth and metastasis in real-time [16]. |
| Multi-Angle Light Scattering (MALS) Detector | Used with GPC for absolute molecular weight determination of polymers without need for column calibration [17]. | Provides accurate data for complex polymers (branched, structured). Requires proper training for data interpretation [17]. |
| Universal Calibration (GPC) | A calibration method based on hydrodynamic volume, offering more accurate MW for polymers that differ structurally from the standards [17]. | More accurate than traditional calibration when analyzing polymers for which matched standards are not available [17]. |
| Cryoscopic Apparatus | Determines molecular weight of small molecules by measuring the freezing point depression of a solvent [19]. | Best for non-ionic solutions. Common solvents include benzene (Kf=5.12) and camphor (Kf=39.7) [19]. |

FAQs and Troubleshooting Guides

This technical support resource addresses common challenges researchers face when integrating Artificial Intelligence (AI) and human-relevant data into preclinical and translational studies. The guidance is framed within the broader thesis of overcoming the limitations of traditional in vivo models.

AI Implementation and Data Management

Q1: Our AI models for toxicity prediction are performing poorly on new chemical entities. What could be the issue? A common cause is model drift or encountering out-of-distribution data, where new data differs significantly from the training set [11]. To troubleshoot:

- Audit your data pipeline: Check whether the feature distributions of the new compounds resemble those of your training set, using statistical tests (e.g., Kolmogorov-Smirnov) for feature-by-feature comparison.

- Retrain with expanding datasets: Continuously incorporate new, high-quality data from human-relevant models like Organ-Chips to improve generalizability [20].

- Implement uncertainty quantification: Use techniques like Monte Carlo dropout to flag predictions where the model has low confidence [11].
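The distribution check from the first bullet can be sketched with a two-sample Kolmogorov-Smirnov statistic. The version below is a standard-library illustration under stated assumptions: the function names and the example descriptor values are hypothetical, and the decision rule uses the usual large-sample critical value rather than an exact p-value. In practice a statistics library would normally be used instead.

```python
import math

def ks_statistic(a, b):
    """Largest gap between the empirical CDFs of samples a and b."""
    a, b = sorted(a), sorted(b)
    n, m = len(a), len(b)
    i = j = 0
    d = 0.0
    while i < n and j < m:
        x = min(a[i], b[j])
        # Advance both samples past every value <= x, then compare the CDFs.
        while i < n and a[i] <= x:
            i += 1
        while j < m and b[j] <= x:
            j += 1
        d = max(d, abs(i / n - j / m))
    return d

def looks_drifted(train, new, c_alpha=1.358):
    """Compare D with the large-sample critical value (c_alpha = 1.358 for alpha = 0.05)."""
    n, m = len(train), len(new)
    return ks_statistic(train, new) > c_alpha * math.sqrt((n + m) / (n * m))

# Hypothetical values of one molecular descriptor in the training set and a new batch
train_feature = list(range(50))        # 0..49
new_feature = list(range(30, 80))      # 30..79, shifted upward
print(round(ks_statistic(train_feature, new_feature), 3))  # 0.6
print(looks_drifted(train_feature, new_feature))           # True
```

Running the check per feature and flagging the features that exceed the threshold gives a quick view of where new chemical entities fall outside the training distribution.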

Q2: How can we address the "black box" nature of complex AI models to satisfy regulatory requirements for drug submissions? Regulators like the FDA emphasize model interpretability and credibility [21]. Solutions include:

- Leverage explainable AI (XAI) techniques: Integrate tools like SHAP (SHapley Additive exPlanations) to quantify the contribution of each input feature to a prediction. A tutorial on SHAP analysis is available for drug development applications [11].

- Adopt a risk-based framework: Follow the FDA's draft guidance (Jan 2025) which outlines a risk-based credibility assessment for AI models supporting regulatory decisions [21].

- Maintain rigorous documentation: Keep detailed records of model design, training data, validation protocols, and performance metrics throughout the entire lifecycle [21].
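To make the idea of per-feature contributions concrete, the sketch below computes exact Shapley values for a tiny model by enumerating feature coalitions. It illustrates the attribution principle that SHAP approximates and does not use the SHAP library itself. The toy scoring function, the feature names, and the baseline are hypothetical.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley attribution of model(x) - model(baseline) to each feature.

    Features outside a coalition are set to their baseline value.
    Cost grows as 2**n, so this is only practical for a handful of features.
    """
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                with_i = [x[j] if (j in subset or j == i) else baseline[j] for j in range(n)]
                without_i = [x[j] if j in subset else baseline[j] for j in range(n)]
                phi[i] += weight * (model(with_i) - model(without_i))
    return phi

# Hypothetical toxicity score with one interaction term (illustration only)
def toy_model(features):
    logp, mol_weight, h_donors = features
    return 0.5 * logp + 0.01 * mol_weight + 0.2 * logp * h_donors

x = [4.0, 350.0, 2.0]          # compound being explained
baseline = [2.0, 300.0, 1.0]   # reference compound
phi = shapley_values(toy_model, x, baseline)
print([round(v, 3) for v in phi])  # [1.6, 0.5, 0.6]
```

The contributions sum to the difference between the prediction for the compound and the prediction for the baseline, which is the property that makes this kind of attribution easy to document for reviewers.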

Q3: What are the best practices for managing sensitive human data in AI-driven research? Protecting patient privacy while enabling research is critical.

- Use privacy-preserving techniques: Employ federated learning, where the AI model is shared and trained locally at each data source (e.g., hospital), and only model updates are aggregated. This "drastically reduces privacy concerns" by not moving patient data [21].

- Ensure robust data governance: Implement strict access controls, data anonymization, and encryption in line with HIPAA and GDPR [21].

- Consider synthetic data: For initial model development and testing, use high-quality synthetic data generated to mimic real datasets without privacy risks [21].
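The federated learning pattern described in the first bullet can be sketched as federated averaging on a toy linear model. This is a simplified illustration, not a production framework: the site data, learning rate, and function names are hypothetical, and real deployments add secure aggregation, authentication, and far larger models.

```python
def local_update(weights, data, lr=0.1, epochs=20):
    """Gradient descent on y = w0 + w1 * x using one site's private data."""
    w0, w1 = weights
    for _ in range(epochs):
        g0 = sum((w0 + w1 * x - y) for x, y in data) / len(data)
        g1 = sum((w0 + w1 * x - y) * x for x, y in data) / len(data)
        w0, w1 = w0 - lr * g0, w1 - lr * g1
    return [w0, w1]

def federated_average(site_weights, site_sizes):
    """Average the site models, weighted by the number of local records."""
    total = sum(site_sizes)
    return [
        sum(w[k] * n for w, n in zip(site_weights, site_sizes)) / total
        for k in range(len(site_weights[0]))
    ]

def train(sites, rounds=100):
    global_w = [0.0, 0.0]
    for _ in range(rounds):
        # Each site trains locally; only the updated weights leave the site.
        updates = [local_update(global_w, data) for data in sites]
        global_w = federated_average(updates, [len(d) for d in sites])
    return global_w

# Hypothetical records held at two hospitals, both following y = 1 + 2x
site_a = [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)]
site_b = [(1.5, 4.0), (2.0, 5.0)]
w = train([site_a, site_b])
print([round(v, 3) for v in w])  # [1.0, 2.0]
```

The raw `(x, y)` records never appear in `train`; the coordinator sees only weight vectors and record counts.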

Human-Relevant Models and Technologies

Q4: Our organization is interested in using Organ-on-a-Chip technology, but we are concerned about throughput and reproducibility. What solutions exist? Traditional barriers to adoption are being overcome with new integrated systems.

- Adopt integrated emulation systems: Platforms like the AVA Emulation System are designed as self-contained workstations that support up to 96 Organ-Chip emulations in a single run, enabling higher throughput and harmonized datasets [22].

- Automate workflows: Integrate systems with robotic liquid handlers and built-in real-time imaging to minimize manual intervention and improve reproducibility [22].

- Validate against known compounds: Build internal confidence by running a set of compounds with well-characterized human toxicity profiles to benchmark the system's predictive performance [22].
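The benchmarking step in the last bullet reduces to comparing the system's calls with the known human outcomes. A minimal sketch follows; the compound names and outcomes are placeholders, not data from the cited sources.

```python
def benchmark(predictions, reference):
    """Both arguments map compound name -> True if flagged or known as toxic."""
    tp = sum(predictions[c] and reference[c] for c in reference)
    tn = sum(not predictions[c] and not reference[c] for c in reference)
    fp = sum(predictions[c] and not reference[c] for c in reference)
    fn = sum(not predictions[c] and reference[c] for c in reference)
    # Assumes the reference set contains both toxic and non-toxic compounds.
    return {"sensitivity": tp / (tp + fn), "specificity": tn / (tn + fp)}

# Placeholder benchmark set with known human toxicity outcomes
reference = {"cmpd_A": True, "cmpd_B": True, "cmpd_C": True, "cmpd_D": False, "cmpd_E": False}
# Placeholder calls made by the Organ-Chip workflow
predictions = {"cmpd_A": True, "cmpd_B": True, "cmpd_C": False, "cmpd_D": False, "cmpd_E": False}

print(benchmark(predictions, reference))
```

Reporting sensitivity and specificity separately shows whether the system misses known toxicants or over-flags safe compounds.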

Q5: How can we effectively integrate data from human-relevant models (e.g., Organ-Chips, perfused organs) with AI for better prediction? Creating a unified data stack is key to unlocking insights.

- Build a Human Data Stack: Create an integrated data layer that contextualizes information from various sources, such as patient medical records, Organ-Chip outputs, and perfused organ data. Companies like Revalia Bio are pioneering such platforms to power "Phase 0 Human Trials" [20].

- Use AI as the integrative layer: Apply machine learning to unify these disparate, high-fidelity human datasets and uncover insights into human physiology that no single approach could reveal alone [23].

- Focus on data standardization: Ensure data from human-relevant models is captured in consistent, machine-readable formats to facilitate seamless integration with AI/ML pipelines.
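One way to approach the standardization point is to fix a record schema up front and serialize every reading the same way. The sketch below uses a dataclass and JSON Lines. The field names and units are hypothetical and would need to follow whatever data standard your organization adopts.

```python
import json
from dataclasses import dataclass, asdict

@dataclass
class ChipReading:
    chip_id: str
    organ_model: str
    compound: str
    dose_um: float       # dose in micromolar
    timepoint_h: float   # hours after dosing
    analyte: str
    value: float
    unit: str

def to_json_lines(readings):
    """Serialize readings as one JSON object per line with stable key order."""
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in readings)

# Hypothetical readings from two chips
readings = [
    ChipReading("chip-001", "liver", "cmpd_A", 10.0, 24.0, "ALT", 35.2, "U/L"),
    ChipReading("chip-002", "liver", "vehicle", 0.0, 24.0, "ALT", 11.0, "U/L"),
]
print(to_json_lines(readings))
```

Keeping units and timepoints as explicit fields, rather than encoding them in file names, makes the records directly usable by downstream AI/ML pipelines.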

Experimental Protocols for Key Methodologies

Protocol 1: AI-Assisted Diagnostic and Pathogen Identification Using Gram Stain Images

This protocol outlines the use of a pre-trained Convolutional Neural Network (CNN) to identify bacteria from Gram-stain slides, a method demonstrated with approximately 95% accuracy in classifying image sections [24].

- 1. Sample Preparation: Prepare Gram-stain slides from positive blood culture samples following standard clinical microbiological protocols [24].

- 2. Image Acquisition and Preprocessing:

- Use a high-resolution digital microscope to capture images of the slides.

- Automatically crop the images into multiple smaller sections to create a large dataset of image crops for training and validation. The cited study used approximately 100,000 classified image sections [24].

- 3. Model Adaptation and Training:

- Select a pre-trained CNN (e.g., models originally designed for ImageNet classification).

- Modify the final layers of the network to correspond to your bacterial classification categories (e.g., Gram-positive cocci in clusters, Gram-negative rods).

- Train the model on the dataset of cropped and classified images, using a standard split (e.g., 80/20 for training and validation).

- 4. Validation and Deployment:

- Validate the model's performance on a held-out test set of whole slides, measuring accuracy and other relevant metrics. The cited study achieved 92.5% accuracy on whole slides [24].

- Integrate the validated model into the clinical or research workflow for rapid, AI-assisted pathogen identification.
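Two bookkeeping steps in this protocol, cropping a slide image into sections and turning per-crop predictions into a whole-slide call, can be sketched as follows. The tile size, the class names, the "background" label, and the majority-vote rule are assumptions made for illustration; the source does not describe how crop predictions were aggregated in the cited study.

```python
from collections import Counter

def crop_boxes(width, height, tile, stride=None):
    """Return (left, top, right, bottom) boxes that tile an image without padding."""
    stride = stride or tile
    return [
        (x, y, x + tile, y + tile)
        for y in range(0, height - tile + 1, stride)
        for x in range(0, width - tile + 1, stride)
    ]

def slide_label(crop_labels, ignore=("background",)):
    """Majority vote over crop predictions, skipping uninformative crops."""
    votes = Counter(label for label in crop_labels if label not in ignore)
    return votes.most_common(1)[0][0] if votes else None

boxes = crop_boxes(width=1000, height=500, tile=250)
print(len(boxes), boxes[0])  # 8 (0, 0, 250, 250)

# Hypothetical per-crop outputs from the fine-tuned CNN
crops = ["gram_negative_rods", "background", "gram_negative_rods", "gram_positive_cocci_clusters"]
print(slide_label(crops))  # gram_negative_rods
```

Because a slide-level call depends on many crops, whole-slide accuracy should be reported separately from crop-level accuracy, as the cited figures do.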

Protocol 2: Establishing a Human-Relevant Liver Model for Predictive Toxicology

This protocol describes the use of Liver-Chip models to predict drug-induced liver injury (DILI), a leading cause of drug failure. These models have been shown to outperform conventional animal and hepatic spheroid models [20] [22].

- 1. System Setup:

- Use a commercial Liver-Chip system (e.g., from Emulate). These are microfluidic devices lined with living human liver cells and blood vessel cells, designed to mimic organ-level physiology [20].

- 2. Cell Culture and Maintenance:

- Seed primary human hepatocytes and endothelial cells into the respective channels of the chip following the manufacturer's instructions.

- Maintain the chips in a controlled environment, perfusing them with cell-specific culture media to ensure long-term viability and functionality.

- 3. Compound Dosing and Experimentation:

- Introduce the drug candidate into the chip's vascular channel at a range of clinically relevant concentrations.

- Run experiments with multiple chips per condition to ensure statistical power. New integrated systems allow for dozens of chips to be run in parallel [22].

- 4. Endpoint Analysis:

- Biomarker Sampling: Regularly collect effluent from the chip's channels to measure markers of hepatic function (e.g., albumin, urea) and of injury (e.g., the liver enzymes ALT and AST).

- Imaging: Use integrated or external microscopes to monitor cell morphology and viability in real-time [22].

- Transcriptomics/Proteomics: (Optional) Lyse the chips at the endpoint for downstream omics analysis to investigate mechanisms of toxicity.

- 5. Data Integration:

- Compare the results from the Liver-Chip to historical data from animal models and known human outcomes.

- Use the human-relevant data generated to build or validate AI models for DILI prediction, creating a more reliable tool for future compound prioritization [20].
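Before any comparison with historical data, the replicate chip readings for each condition need to be summarized. The sketch below computes the mean, standard deviation, and fold-change relative to vehicle control for one biomarker. The condition names and ALT values are invented for illustration only.

```python
from statistics import mean, stdev

def summarize(readings, control="vehicle"):
    """readings maps condition -> list of replicate values from separate chips."""
    control_mean = mean(readings[control])
    return {
        condition: {
            "mean": mean(values),
            "sd": stdev(values),
            "fold_vs_control": mean(values) / control_mean,
        }
        for condition, values in readings.items()
    }

# Hypothetical effluent ALT readings (U/L), three chips per condition
alt = {
    "vehicle": [10.0, 12.0, 11.0],
    "low_dose": [11.0, 13.0, 12.0],
    "high_dose": [30.0, 36.0, 33.0],
}
summary = summarize(alt)
print(round(summary["high_dose"]["fold_vs_control"], 2))  # 3.0
```

A summary of this kind, one row per compound and condition, is also a convenient input format for the DILI prediction models mentioned above.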

The following tables consolidate key quantitative data on AI adoption and the performance of new research methodologies.

Table 1: AI Adoption and Impact in Life Sciences (2025 Survey Data)

| Metric | Finding | Source |

|---|---|---|

| Organizations using AI | 88% report regular use in at least one business function | [25] |

| AI Scaling Status | Nearly two-thirds (≈65%) are in experimentation or piloting phases, not yet scaling across the enterprise | [25] |

| Top Implementation Barrier | Nearly 80% of respondents cited a lack of in-house AI expertise as the top barrier | [21] |

| Enterprises with EBIT impact from AI | 39% report some level of enterprise-wide EBIT impact from AI use | [25] |

| AI high performers | About 6% of organizations are "AI high performers," seeing significant value and EBIT impact >5% | [25] |

Table 2: Performance of Human-Relevant Models and AI in R&D

| Model/Application | Key Performance Metric | Context/Source |

|---|---|---|

| Human Liver-Chip | Better predicted drug-induced liver injury (DILI) than animal and hepatic spheroid models | Validated study paving the way for FDA's ISTAND program [22] |

| AVA Emulation System | Reduces cost per sample by >75% compared to earlier Organ-Chip models | Enables broader adoption in academia and industry [22] |

| AI for Gram Stain Classification | ≈95% accuracy classifying image crops; 92.5% accuracy classifying entire slides | Study using a pre-trained CNN on 100,000 image sections [24] |

| AI for Blood Culture Prediction | AUC of 0.99 (ROC) and 0.82 (precision-recall) for predicting outcomes in ICU patients | Bidirectional LSTM model using 9 clinical characteristics over time [24] |

| Traditional Drug Development | 90% failure rate for candidates entering clinical trials | Highlights the insufficiency of traditional animal models [22] |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Human-Relevant AI-Driven Research

| Item | Function in Research |

|---|---|

| Organ-on-a-Chip Systems (e.g., Liver-, Kidney-Chip) | Microfluidic devices lined with living human cells to emulate human organ physiology and predict drug safety/efficacy in a human-relevant context [20] [22]. |

| Organ Perfusion Systems | Technology to maintain donated human organs in a living state ex vivo for hours or days, creating a platform for highly physiologically relevant drug testing and data generation [23]. |

| Federated Learning Software Frameworks | Enable collaborative AI model training across multiple institutions (e.g., different hospitals) without sharing or moving sensitive raw patient data, thus addressing key privacy concerns [21]. |

| Explainable AI (XAI) Tools (e.g., SHAP, LIME) | Provide post-hoc interpretations of complex AI model predictions, identifying which input features drove a specific output. Critical for building trust and meeting regulatory expectations [11]. |

| Cloud-Based Analytics Pipelines (e.g., on AWS/Azure) | Scalable infrastructure for storing and processing the large, complex datasets generated by human-relevant models and multi-omics analyses, facilitating RWE generation and AI integration [11]. |

Workflow and Signaling Pathway Visualizations

AI Integration in Drug Discovery Workflow

AI-Assisted Diagnostic Pathway for Infections

Data Integration Stack for Human-Relevant Prediction

Advanced In Vivo Tools in Action: From mRNA Delivery to Digital Biomarkers

FAQs: Core Concepts and System Selection

What are the primary advantages of using mRNA over DNA for in vivo gene therapy? mRNA offers several key advantages: it does not need to enter the cell nucleus to function, thereby eliminating the risk of insertional mutagenesis into the host genome [26]. Its activity is transient, allowing for easier regulation of protein production and reducing the risk of long-lasting side effects [26]. mRNA manufacturing is also cost-effective and simpler to scale for mass production [26].

How does CRISPR-Cas9 ribonucleoprotein (RNP) delivery compare to plasmid DNA delivery? RNP delivery, where pre-assembled complexes of Cas9 protein and guide RNA are delivered, is often preferred over plasmid DNA. RNPs are immediately active upon delivery, which leads to increased editing precision, reduced off-target effects, and lower cytotoxicity compared to plasmids, which require transcription and translation within the cell [27].

What are the main challenges associated with viral vectors for in vivo delivery? While viral vectors like AAVs offer high transduction efficiency, they face significant challenges. These include immunogenicity, the risk of insertional mutagenesis, and limited cargo capacity [26] [28]. AAVs, for instance, have a payload limit of about 4.7 kb, which is too small for the standard SpCas9 nuclease, sgRNA, and a donor template without sophisticated workarounds [27].
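The payload constraint is easy to see with rough arithmetic. In the sketch below, only the SpCas9 coding length (1,368 amino acids) is a fixed figure; the promoter, polyA, sgRNA cassette, and donor template sizes are illustrative placeholders, and the inverted terminal repeats are ignored for simplicity.

```python
AAV_CAPACITY_BP = 4700  # approximate packaging limit cited in the text

PARTS_BP = {
    "spcas9_cds": 1368 * 3 + 3,   # 1,368 codons plus a stop codon = 4107 bp
    "promoter": 250,              # placeholder for a compact promoter
    "polyA": 50,                  # placeholder for a minimal polyA signal
    "u6_sgrna_cassette": 350,     # placeholder
    "donor_template": 1000,       # placeholder
}

def payload_bp(parts):
    return sum(PARTS_BP[p] for p in parts)

def fits(parts):
    return payload_bp(parts) <= AAV_CAPACITY_BP

nuclease_only = ["spcas9_cds", "promoter", "polyA"]
everything = nuclease_only + ["u6_sgrna_cassette", "donor_template"]
print(payload_bp(nuclease_only), fits(nuclease_only))  # 4407 True
print(payload_bp(everything), fits(everything))        # 5757 False
```

Under these assumptions the nuclease cassette alone nearly fills the vector, which is why workarounds such as splitting components across two vectors or using smaller nucleases are needed.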

Why are Lipid Nanoparticles (LNPs) a popular non-viral delivery system? LNPs are synthetic nanoparticles that protect their mRNA or CRISPR cargo from degradation and facilitate cellular entry [26]. They gained prominence during the COVID-19 pandemic for mRNA vaccine delivery and are attractive due to their minimal safety and immunogenicity concerns (lacking viral components), their ability to deliver various cargo types (DNA, mRNA, RNP), and the ongoing development of organ-targeted LNP formulations [27].

Troubleshooting Common Experimental Problems

Low Editing Efficiency

- Problem: The CRISPR-Cas9 system is not efficiently editing the target site.

- Solutions:

- Verify gRNA Design: Ensure your gRNA sequence is unique to the target and has a high on-target score. Use established design tools that predict and minimize off-target sites [29].

- Optimize Delivery Method: Different cell types require different delivery strategies. Test alternative methods such as electroporation or lipofection, and optimize conditions for your specific cell type [29].

- Check Cargo Form: If using plasmid DNA, consider switching to mRNA or RNP, which can lead to faster and more efficient editing [27].

- Confirm Promoter and Codon Usage: Ensure the promoter driving Cas9/gRNA expression is active in your target cells. Codon-optimization of the Cas9 gene for your host organism can significantly improve expression levels [29].

High Off-Target Effects

- Problem: The Cas9 nuclease cuts at unintended genomic sites, leading to unwanted mutations.

- Solutions:

- Use High-Fidelity Cas Variants: Employ engineered Cas9 variants (e.g., high-fidelity SpCas9) or alternative nucleases like Cas12Max that are designed to reduce off-target cleavage [27] [29].

- Refine gRNA Selection: Utilize computational algorithms to design gRNAs with high specificity. These tools score guides based on their potential for off-target activity, helping you select the best candidate [30].

- Delivery Optimization: Deliver CRISPR components as RNP complexes. The transient nature of RNP activity can shorten the editing window, limiting opportunities for off-target cleavage [27].

- Leverage Virus-Like Particles (VLPs): VLPs enable transient delivery of CRISPR components, which reduces the possibility of long-term expression and subsequent off-target editing [27].
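The core of what gRNA design tools do, locating candidate target sites and checking how closely other sites resemble the guide, can be sketched in a few lines. This is a deliberately simplified illustration: it scans only the forward strand for NGG PAMs, counts plain mismatches, and ignores position-dependent weighting, bulges, and chromatin context that established tools take into account. All sequences are made up.

```python
def find_protospacers(seq, length=20):
    """Return (position, protospacer, PAM) for each NGG PAM on the forward strand."""
    seq = seq.upper()
    sites = []
    for i in range(len(seq) - length - 2):
        pam = seq[i + length:i + length + 3]
        if pam[1:] == "GG":
            sites.append((i, seq[i:i + length], pam))
    return sites

def mismatches(guide, site):
    """Number of positions at which two equal-length sequences differ."""
    return sum(a != b for a, b in zip(guide, site))

def near_matches(guide, candidate_sites, max_mismatches=3):
    """Candidate off-target sites: similar to the guide but not identical."""
    return [s for s in candidate_sites if 0 < mismatches(guide, s) <= max_mismatches]

target_region = "ACGTACGTACGTACGTACGT" + "TGG" + "AAAA"
print(find_protospacers(target_region))
# [(0, 'ACGTACGTACGTACGTACGT', 'TGG')]

guide = "ACGTACGTACGTACGTACGT"
others = ["ACGTACGTACGTACGTACGA", "TTTTTTTTTTTTTTTTTTTT"]
print(near_matches(guide, others))  # ['ACGTACGTACGTACGTACGA']
```

A guide with few or no near matches elsewhere in the genome is a better starting point, although experimental validation of off-target activity is still required.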

Cell Toxicity and Low Viability

- Problem: Cells experience death or low survival rates after CRISPR-Cas9 delivery.

- Solutions:

- Titrate Component Concentration: High concentrations of CRISPR components can be toxic. Start with lower doses and titrate upwards to find a balance between effective editing and cell viability [29].

- Switch Cargo Type: Plasmid DNA can cause higher cytotoxicity and immune responses. Switching to RNP delivery can mitigate this toxicity [27].

- Utilize Nuclear Localization Signals (NLS): Using a Cas9 protein with an NLS can enhance nuclear import and targeting efficiency, allowing you to use lower overall doses and reduce cytotoxicity [29].

Experimental Protocols for Key Workflows

Protocol: Lipid Nanoparticle (LNP) Mediated mRNA Delivery

Objective: To efficiently deliver mRNA cargo to target cells in vivo using LNPs.

- mRNA Preparation: Synthesize and purify the mRNA of interest (e.g., coding for Cas9). Include a 5' cap (e.g., CleanCap) and a 3' poly(A) tail to enhance stability and translation [26] [31].

- LNP Formulation: Prepare a lipid mixture typically containing an ionizable lipid, phospholipid, cholesterol, and a PEG-lipid in an ethanol solution.

- Nanoparticle Formation: Mix the aqueous mRNA solution with the lipid ethanol solution using a microfluidic device. This rapid mixing leads to the self-assembly of LNPs encapsulating the mRNA.

- Dialysis and Purification: Dialyze the LNP formulation against a buffer to remove ethanol and achieve the desired pH. Then, filter-sterilize the final product.

- In Vivo Administration: Administer the LNP-mRNA formulation to the animal model via an appropriate route (e.g., intravenous injection for liver targeting).

- Analysis: Harvest target tissues at specified time points to analyze protein expression and functional efficacy.

Protocol: RNP Delivery via Electroporation

Objective: To achieve high-efficiency gene editing in hard-to-transfect cells, such as stem and primary cells, using pre-assembled Cas9 RNP.

- RNP Complex Assembly: Incubate purified Cas9 protein with synthetic sgRNA at a Cas9:sgRNA molar ratio of 1:1.2 to 1:1.5 for 10-20 minutes at room temperature to form the RNP complex.

- Cell Preparation: Harvest and wash the target cells. Resuspend them in an electroporation-compatible buffer.

- Electroporation: Mix the cell suspension with the pre-assembled RNP complexes. Transfer the mixture to an electroporation cuvette and apply an optimized electrical pulse using a nucleofector device.

- Recovery and Culture: Immediately after electroporation, transfer the cells to pre-warmed culture medium and incubate under standard conditions.

- Efficiency Assessment: After 48-72 hours, harvest a sample of cells to assess editing efficiency using methods like T7 Endonuclease I assay or next-generation sequencing.
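Two calculations recur in this protocol: converting the molar ratio into amounts to pipette, and estimating indel frequency from T7 Endonuclease I gel band intensities. The sketch below assumes approximate molecular weights of 160 kDa for SpCas9 and 32 kDa for a roughly 100-nt sgRNA; use the values on your reagent datasheets. The indel estimate is the commonly used relation 100 × (1 − sqrt(1 − fraction cleaved)), and the band intensities are hypothetical.

```python
from math import sqrt

CAS9_KDA = 160.0    # approximate SpCas9 molecular weight (assumption)
SGRNA_KDA = 32.0    # approximate weight of a ~100-nt sgRNA (assumption)

def rnp_amounts(cas9_pmol, sgrna_per_cas9=1.2):
    """Amounts needed for a given Cas9 quantity and sgRNA:Cas9 molar ratio."""
    sgrna_pmol = cas9_pmol * sgrna_per_cas9
    return {
        "cas9_ug": cas9_pmol * CAS9_KDA / 1000.0,   # pmol * kDa = ng, so / 1000 gives ug
        "sgrna_pmol": sgrna_pmol,
        "sgrna_ug": sgrna_pmol * SGRNA_KDA / 1000.0,
    }

def t7e1_indel_percent(parent_band, cleaved_band_1, cleaved_band_2):
    """Estimate indel frequency from densitometry of a T7E1 digest."""
    cleaved = cleaved_band_1 + cleaved_band_2
    fraction_cleaved = cleaved / (parent_band + cleaved)
    return 100.0 * (1.0 - sqrt(1.0 - fraction_cleaved))

amounts = rnp_amounts(cas9_pmol=100, sgrna_per_cas9=1.2)
print(round(amounts["cas9_ug"], 2), round(amounts["sgrna_ug"], 2))  # 16.0 3.84
print(round(t7e1_indel_percent(50, 30, 20), 1))                     # 29.3
```

The T7E1 estimate tends to understate editing at high efficiencies, which is one reason next-generation sequencing is preferred when precise quantification matters.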

Signaling Pathways and Experimental Workflows

In Vivo mRNA Therapeutic Pathway

CRISPR-Cas9 Genome Editing Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential Reagents for In Vivo mRNA and CRISPR-Cas9 Studies

| Reagent / Material | Function | Key Considerations |

|---|---|---|

| Ionizable Lipids | Core component of LNPs; enables encapsulation and cellular delivery of nucleic acids [26]. | Optimized for endosomal escape and reduced immunogenicity. Critical for in vivo delivery efficiency. |

| CleanCap Analog | Co-transcriptional capping technology for mRNA [31]. | Creates Cap1 structure, enhancing stability and translation efficiency. A key factor in mRNA potency. |

| High-Fidelity Cas9 | Engineered nuclease with reduced off-target effects [29]. | Essential for improving the specificity and safety of CRISPR-based gene editing. |

| Synthetic sgRNA | Guides the Cas nuclease to the specific target DNA sequence [27]. | High purity is critical for performance. Can be used with DNA, mRNA, or as part of an RNP complex. |

| Selective Organ Targeting (SORT) Molecules | Engineered molecules added to LNPs to direct them to specific tissues beyond the liver [27]. | Enables targeted in vivo delivery to organs like the lungs and spleen. |

| Codon-Optimized Cas9 mRNA | mRNA sequence engineered for high expression in the target organism [29]. | Improves protein yield and editing efficiency by matching the host's tRNA abundance. |

Core Experimental Protocols for Ligand-Functionalized Fe₃O₄ NPs

The synthesis and functionalization of Iron Oxide Nanoparticles (IONPs) are critical first steps in developing targeted nanomedicine. The table below summarizes the fundamental methodologies.

Table 1: Core Synthesis Methods for Fe₃O₄ Nanoparticles [32] [33]

| Method Name | Key Principle | Advantages | Disadvantages | Key Influencing Factors |

|---|---|---|---|---|

| Co-precipitation | Precipitation of Fe²⁺ and Fe³⁺ ions in a basic aqueous solution [33]. | Simple procedure, high yield, good hydrophilicity [33]. | Broad size distribution (polydispersity) [33]. | Temperature, pH, ionic strength, and type of iron salts used [33]. |

| Thermal Decomposition | High-temperature decomposition of organometallic precursors (e.g., iron acetylacetonate) in organic solvents [32] [33]. | Excellent monodispersity and high crystallinity; precise size control [33]. | Hydrophobic product often requires subsequent surface modification for biological use [33]. | Heating rate, reaction temperature, and duration [33]. |

| Solvothermal/Hydrothermal | Reaction in a sealed vessel at high temperature and pressure [32] [33]. | High product crystallinity, good hydrophilicity, no need for post-synthesis calcination [33]. | High equipment cost; stringent requirements for temperature, pressure, and vessel integrity [33]. | Solvent type, reaction time, and temperature [33]. |

| Microemulsion | Confinement of co-precipitation reaction within nanoscale water droplets of a water-in-oil microemulsion [33]. | Good control over particle size and monodispersity [33]. | Low yield; requires large amounts of surfactant, which can be toxic and difficult to remove [33]. | Type and concentration of surfactant, reaction temperature, and time [33]. |

Detailed Protocol: Ligand Conjugation via Carbodiimide Coupling

A common method to functionalize IONPs with targeting ligands (e.g., antibodies, folic acid) is the carbodiimide coupling reaction, which links carboxyl (-COOH) and amine (-NH₂) groups.

Materials:

- Reagents: Fe₃O₄ NPs with carboxylated surface (e.g., coated with citric acid, DMSA), N-(3-Dimethylaminopropyl)-N′-ethylcarbodiimide (EDC), N-Hydroxysuccinimide (NHS), targeting ligand (e.g., Folic Acid, Anti-EGFR antibody), reaction buffer (e.g., MES at pH ~6.0 for EDC activation; PBS at pH 7.2-7.5 for the subsequent ligand coupling).

- Equipment: Microcentrifuge, vortex mixer, orbital shaker, dialysis tubing or magnetic separation columns, UV-Vis spectrophotometer.

Procedure:

- Activation of Carboxyl Groups: Disperse 1 mg of carboxylated Fe₃O₄ NPs in 1 mL of reaction buffer. Add freshly prepared solutions of EDC (typical final concentration 2-10 mM) and NHS (typical final concentration 5-25 mM) to the NP suspension. Vortex and incubate for 15-30 minutes on an orbital shaker at room temperature to form an active NHS ester intermediate [34].

- Ligand Conjugation: Add the targeting ligand (e.g., Folic Acid, antibody) to the activated NP suspension. The ligand concentration must be optimized based on the available activation sites and the desired surface density. Incubate the reaction mixture for 2-4 hours at room temperature or overnight at 4°C with gentle shaking.

- Purification: Separate the conjugated NPs (F-Fe₃O₄ NPs) from unreacted reagents and ligand by-products. This can be achieved via:

- Magnetic Separation: Place the tube on a strong magnet for several minutes. Discard the supernatant and resuspend the pellet in a clean buffer (e.g., PBS). Repeat 3-4 times.

- Dialysis: Dialyze the suspension against a large volume of buffer for 24-48 hours, changing the buffer periodically.

- Centrifugation: Centrifuge at high speed (e.g., 14,000 rpm for 20 min) and wash the pellet.

- Characterization: Verify successful conjugation using techniques such as:

- Fourier-Transform Infrared Spectroscopy (FTIR) to detect new chemical bonds (e.g., amide I band at ~1650 cm⁻¹).

- UV-Vis Spectroscopy to confirm the presence of the ligand via its characteristic absorption peak.

- Zeta Potential measurement to observe a change in surface charge post-conjugation.
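The activation step specifies final concentrations, so the mass of each coupling agent has to be worked out for the reaction volume. The sketch below does that conversion. It assumes EDC is supplied as the hydrochloride salt (191.70 g/mol) and uses 115.09 g/mol for NHS; the chosen concentrations are examples from within the stated ranges.

```python
MW_G_PER_MOL = {
    "EDC_HCl": 191.70,   # EDC hydrochloride (assumed reagent form)
    "NHS": 115.09,
}

def mass_mg(reagent, final_mm, volume_ml):
    """Mass (mg) needed to reach a final concentration (mM) in a given volume (mL)."""
    micromoles = final_mm * volume_ml            # mM * mL = umol
    return micromoles * MW_G_PER_MOL[reagent] / 1000.0   # umol * g/mol = ug, then to mg

# Example: 5 mM EDC and 10 mM NHS in the 1 mL NP suspension
print(round(mass_mg("EDC_HCl", 5, 1.0), 3))  # 0.959
print(round(mass_mg("NHS", 10, 1.0), 3))     # 1.151
```

Sub-milligram quantities are difficult to weigh accurately, so a common approach is to prepare a more concentrated stock immediately before use and add the corresponding volume.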

Troubleshooting Guide for Common Experimental Challenges

Table 2: Troubleshooting Common Issues with Functionalized IONPs

| Problem | Potential Causes | Solutions & Recommendations |

|---|---|---|

| Nanoparticle Aggregation | High surface energy of naked IONPs; insufficient surface coating; oxidation of Fe₃O₄ to Fe₂O₃ [32] [35]. | (1) Synthesize NPs with a stabilizing coating (e.g., polymers, silica) from the start [32]. (2) Functionalize with PEG or other hydrophilic polymers to improve dispersibility and stability [32] [36]. (3) Store NPs in an inert atmosphere or under vacuum. |

| Poor Drug Loading or Premature Release | Incorrect drug-to-carrier ratio; weak interaction between drug and NP; coating is too dense or impermeable [32]. | (1) Optimize the drug loading protocol (incubation time, concentration, pH) [32]. (2) Select a coating material that has high affinity for the drug (e.g., electrostatic, hydrophobic) [32]. (3) Use a stimuli-responsive coating (e.g., pH-sensitive polymer like chitosan) for controlled release at the target site [32]. |

| Low Targeting Specificity In Vivo | Protein corona formation masking the ligand; insufficient ligand density on NP surface; rapid clearance by the immune system (RES) [34] [35]. | (1) Increase ligand density on the NP surface through optimized conjugation chemistry [34]. (2) Employ a PEGylated ("stealth") coating to reduce opsonization and prolong circulation time, improving chances of reaching the target [34] [36]. (3) Use smaller antibody fragments (e.g., scFv) instead of full antibodies to minimize steric hindrance [34]. |

| High Non-Specific Cellular Uptake | Non-specific electrostatic interactions between charged NPs and cell membranes; incomplete blocking of unreacted sites on NP surface after conjugation. | (1) After ligand conjugation, "block" unreacted active sites with a small, inert molecule (e.g., ethanolamine for EDC/NHS). (2) Modify the surface to be near-neutral charge to reduce non-specific binding. |

| Loss of Magnetic Properties | Oxidation of the magnetic core (Fe₃O₄ to γ-Fe₂O₃ and eventually α-Fe₂O₃) [32]. | (1) Ensure a robust, dense coating that protects the core from the environment [32]. (2) Synthesize NPs with a higher degree of crystallinity (e.g., via thermal decomposition) [32]. (3) Store NPs in anoxic conditions. |

Frequently Asked Questions (FAQs)

Q1: What is the difference between passive and active targeting in nanomedicine?

- Passive Targeting relies on the Enhanced Permeability and Retention (EPR) effect, where nanoparticles (typically < 200 nm) accumulate in tumor tissue due to its leaky vasculature and poor lymphatic drainage [34]. This is a non-specific process.

- Active Targeting involves conjugating specific ligands (e.g., folic acid, antibodies, peptides) to the nanoparticle surface. These ligands bind to receptors that are overexpressed on target cells (e.g., cancer cells), facilitating receptor-mediated endocytosis and increasing specific cellular uptake [34] [35].

Q2: My functionalized NPs work well in vitro, but their performance drops significantly in vivo. Why? This is a common challenge due to the vastly more complex in vivo environment. Key reasons include:

- Protein Corona: Upon injection, proteins rapidly adsorb onto the NP surface, forming a "corona" that can mask the targeting ligand and alter the NP's biological identity [35].

- Rapid Clearance: The immune system's reticuloendothelial system (RES), primarily in the liver and spleen, can quickly clear NPs from circulation. While a PEG coating can help, it can also reduce cell interactions if not properly optimized [34] [36].

- Biological Barriers: Physical and physiological barriers, such as the blood-brain barrier (BBB) or high interstitial fluid pressure in tumors, can prevent NPs from reaching their intended target site [34].

Q3: Which characterization techniques are essential for validating my F-Fe₃O₄ NPs before biological experiments? A multi-technique approach is crucial:

- Size & Dispersion: Dynamic Light Scattering (DLS) for hydrodynamic size and polydispersity index (PDI); Transmission Electron Microscopy (TEM) for core size and morphology [35].

- Surface Charge: Zeta Potential to confirm successful coating and conjugation (shifts in value are expected) and to assess colloidal stability [35].

- Chemical Composition: FTIR Spectroscopy to verify the presence of coating and ligand functional groups; X-ray Photoelectron Spectroscopy (XPS) for elemental surface analysis [37].

- Magnetic Properties: Vibrating Sample Magnetometry (VSM) to confirm superparamagnetic behavior and measure saturation magnetization [35].

Q4: How can I assess the specificity and efficacy of my targeted NPs in vitro?

- Cellular Uptake Studies: Use flow cytometry or confocal microscopy (e.g., with a fluorescently tagged drug or NP) to compare uptake in target vs. non-target cells. A key control is a competitive inhibition assay, where an excess of free ligand is added to block receptors and should significantly reduce NP uptake [34].

- Cytotoxicity Assays: Perform MTT or MTS assays to compare the cytotoxicity of drug-loaded targeted NPs vs. non-targeted NPs. Targeted NPs should show significantly higher cytotoxicity in receptor-positive cells but not in receptor-negative cells [32] [33].

Workflow and Mechanism Visualization

Diagram 1: F-Fe₃O₄ NP Synthesis Workflow

Diagram 2: Active Targeting and Intracellular Drug Release Mechanism

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for F-Fe₃O₄ NP Research

| Reagent/Material | Function/Purpose | Key Considerations |

|---|---|---|

| Iron Precursors (e.g., FeCl₃·6H₂O, Fe(acac)₃) | Source of Fe²⁺ and Fe³⁺ for forming the magnetic Fe₃O₄ crystal core [32] [33]. | Purity impacts NP quality. Choice depends on synthesis method (e.g., chlorides for co-precipitation, acetylacetonate for thermal decomposition). |

| Co-Precipitation Agent (e.g., NH₄OH, NaOH) | Provides alkaline conditions necessary for the precipitation of Fe₃O₄ from iron salts in aqueous solution [32]. | Concentration and addition rate control nucleation and growth, affecting final particle size and distribution. |

| Stabilizing Coatings (e.g., Citric Acid, DMSA, PEG, SiO₂, Dextran) | Prevents NP aggregation, provides colloidal stability, and offers functional groups (-COOH, -NH₂) for further conjugation [32] [35] [37]. | Choice dictates hydrophilicity, biocompatibility, and available chemistry for ligand attachment. PEG coatings reduce immune clearance in vivo [36]. |

| Coupling Agents (e.g., EDC, NHS) | Facilitates covalent conjugation between carboxyl groups on the NP and amine groups on the targeting ligand (carbodiimide chemistry) [34]. | Must be used in fresh solutions. Molar ratios and reaction time must be optimized for each ligand to maximize conjugation efficiency. |

| Targeting Ligands (e.g., Folic Acid, Anti-EGFR Antibodies, RGD Peptides, Transferrin) | Confers active targeting specificity by binding to receptors overexpressed on target cells (e.g., cancer cells) [32] [34]. | Size (small molecule vs. antibody) affects density and orientation on NP surface. Binding affinity and receptor copy number on target cells are critical for success. |

| Model/Therapeutic Drugs (e.g., Doxorubicin, Cisplatin, Curcumin) | The active pharmaceutical ingredient to be delivered to the target site [32] [33]. | Drug loading capacity and release kinetics (e.g., pH-triggered) are key performance metrics to optimize. |

Technical Support Center

Troubleshooting Guides & FAQs

This technical support center addresses common challenges researchers face when implementing digital phenotyping technologies in preclinical and clinical research. These solutions are framed within the broader thesis of overcoming tool limitations for in vivo studies.

FAQ: System Performance & Data Collection

Q1: Our smartphone-based digital phenotyping study is experiencing rapid battery drain, disrupting data collection. What are the primary causes and solutions?

Battery drainage is a frequently reported technical hurdle in digital phenotyping studies [38]. The table below summarizes the main causes and recommended mitigation strategies.

| Cause of Battery Drain | Description | Recommended Solution |
|---|---|---|
| High-Power Sensor Usage | GPS tracking and continuous heart rate monitoring are significant power consumers [38]. | Implement adaptive sampling to adjust sensor frequency based on user activity [38]. |
| Continuous Data Transmission | Constant wireless transmission of data to servers depletes battery life [38]. | Utilize sensor duty cycling, which alternates between low-power and high-power sensors [38]. |
| Weak GPS Signal | Operating in areas with poor signal strength can increase battery consumption by up to 38% [38]. | Program the app to use lower-power location services like Wi-Fi or cell tower triangulation when GPS fidelity is less critical. |
| Operating System & Hardware | Different devices and OS versions have varying power management efficiencies [38]. | Standardize devices where possible and select models known for strong battery performance in research settings. |
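As a rough illustration of the adaptive sampling idea in the table, the sketch below picks a GPS sampling interval from the user's current activity state and battery level. The activity labels, interval values, the 20% battery threshold, and the names `SamplingPolicy` and `gps_interval_s` are all assumptions made for this example; they are not taken from [38] or from any particular platform API.

```python
from dataclasses import dataclass


@dataclass
class SamplingPolicy:
    """Hypothetical GPS sampling intervals (seconds) per activity state."""
    stationary_s: int = 600
    walking_s: int = 60
    vehicle_s: int = 15
    low_battery_factor: int = 2  # stretch intervals when the battery is low


def gps_interval_s(activity: str, battery_pct: float,
                   policy: SamplingPolicy = SamplingPolicy()) -> int:
    """Return the number of seconds to wait before the next GPS fix."""
    base = {
        "stationary": policy.stationary_s,
        "walking": policy.walking_s,
        "vehicle": policy.vehicle_s,
    }.get(activity, policy.walking_s)  # unknown state: fall back to walking
    # Assumed rule: below 20% battery, sample less often to preserve power.
    if battery_pct < 20.0:
        base *= policy.low_battery_factor
    return base


if __name__ == "__main__":
    print(gps_interval_s("stationary", battery_pct=80.0))
    print(gps_interval_s("vehicle", battery_pct=10.0))
```

In a real app the activity state would come from the platform's activity-recognition service, and the intervals would need to be tuned against the study's data-quality requirements.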

Q2: We are encountering inconsistent data when using different smartphone brands and operating systems in our study. How can we improve cross-device reliability?

Device heterogeneity is a major challenge to data standardization [38]. Inconsistencies arise from varying hardware configurations and software ecosystems.

- Development Strategy: For research-grade data, native app development (building separate apps for iOS and Android) is recommended over cross-platform frameworks. Native development allows for deeper integration with system-level features and optimized performance for sensor-based data collection [38].

- Data Handling Caution: Be aware that data extracted from platform APIs (e.g., Apple HealthKit, Google Fit) are often pre-processed. Changes in the platform's algorithms can lead to discrepancies in data over time, meaning these data are not truly "raw" [38].

- Standardization Effort: Promote interoperability by using and developing open-source frameworks and standardized Application Programming Interfaces (APIs) to facilitate seamless data integration [38].

Q3: How can we track individual animals in a group-housed home-cage setting without using intrusive methods?

This is a common limitation of simple video-tracking systems. The preferred solution is to use Radio-Frequency Identification (RFID) technology [39] [40] [41].

- Implementation: A miniature RFID microchip is implanted in each animal. A reader plate placed under the home cage continuously scans for these chips [40].

- Data Output: The system can automatically collect data on individual animal location, identity, and physiology (e.g., body temperature) in real-time, even for group-housed mice in their home cage [40] [42]. This eliminates the need for human intervention and reduces stress on the animals.
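To make the data output concrete, here is a minimal sketch of how a stream of RFID reads could be turned into per-animal time spent in each cage zone. The record layout `(timestamp_s, tag_id, zone)`, the function name `zone_dwell_seconds`, and the 5-second gap threshold are assumptions for illustration; commercial systems export their own formats.

```python
from collections import defaultdict


def zone_dwell_seconds(reads, max_gap_s=5.0):
    """Sum time per (tag_id, zone) from RFID reads.

    reads: iterable of (timestamp_s, tag_id, zone) tuples (hypothetical layout).
    The interval between two consecutive reads of the same tag is credited to
    the zone of the earlier read. Gaps longer than max_gap_s are treated as
    missed detections and are not counted.
    """
    dwell = defaultdict(float)
    last_seen = {}  # tag_id -> (timestamp_s, zone)
    for t, tag, zone in sorted(reads):
        if tag in last_seen:
            prev_t, prev_zone = last_seen[tag]
            gap = t - prev_t
            if gap <= max_gap_s:
                dwell[(tag, prev_zone)] += gap
        last_seen[tag] = (t, zone)
    return dict(dwell)


if __name__ == "__main__":
    example = [
        (0, "A", "nest"), (2, "A", "nest"), (4, "A", "feeder"),
        (3, "B", "feeder"), (5, "B", "feeder"), (20, "A", "feeder"),
    ]
    print(zone_dwell_seconds(example))
```

Ignoring long gaps is a conservative choice: it undercounts dwell time rather than guessing where an animal was while it went undetected.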

FAQ: Experimental Design & Data Integrity

Q4: What are the key advantages of Home-Cage Monitoring (HCM) over traditional behavioral tests for in vivo studies?

HCM addresses several core limitations of conventional out-of-cage testing, directly strengthening the validity of evidence from in vivo studies.

| Advantage | Description | Impact on Research |
|---|---|---|
| Reduced Novelty-Induced Stress | Animals are tested in their familiar environment, minimizing a major confounding variable [39] [41]. | Increases data quality and ethological relevance. |
| Longitudinal & Circadian Data | Enables continuous, 24/7 monitoring over days or weeks, capturing natural activity patterns during both light and dark phases [39] [40] [41]. | Reveals progressive changes and circadian rhythms missed by snapshot tests. |
| Minimized Human Interference | Automated data collection reduces experimenter bias and handling stress [39] [40]. | Improves reproducibility and animal welfare. |
| Rich, Unbiased Data | Provides large, continuous datasets on spontaneous behavior, such as locomotor activity, feeding, and social interactions [39] [41]. | Facilitates the discovery of subtle digital biomarkers. |

Q5: For home-cage monitoring, what is considered a sufficient acclimation period before starting data collection?

There is no universal standard. By definition, a "home cage" is an environment the animal already knows, but transferring animals to a specialized monitoring cage introduces novelty, so an acclimation period is still crucial.

- Evidence-Based Guidance: One study using PhenoTyper cages for socially-isolated mice observed behavioral drifts over an 8-day period, with daily distances gradually declining and sleep increasing [39]. This suggests that acclimation should cover at least one full light/dark cycle (24 hours), and preferably several days, to allow behavior to stabilize before experimental interventions [39].

- Best Practice: Specify the planned acclimation period in the experimental protocol, and record baseline behavior during this phase to confirm stability before proceeding.
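One simple way to confirm stability from baseline recordings is to check when the day-to-day change in distance moved stays within a tolerance for several consecutive days. The sketch below is an illustrative heuristic, not a method from [39]; the function name `first_stable_day`, the 10% tolerance, and the two-day window are assumptions that should be adapted to the strain, housing, and endpoint in question.

```python
def first_stable_day(daily_distance_m, tolerance=0.10, window=2):
    """Return the 1-based day on which behavior first looks stable, or None.

    Stability here means that `window` consecutive day-to-day changes in
    distance moved were each within `tolerance` (as a fraction of the
    previous day's value).
    """
    run = 0  # number of consecutive within-tolerance changes so far
    for i in range(1, len(daily_distance_m)):
        prev, cur = daily_distance_m[i - 1], daily_distance_m[i]
        change = abs(cur - prev) / prev if prev else float("inf")
        run = run + 1 if change <= tolerance else 0
        if run >= window:
            return i + 1
    return None


if __name__ == "__main__":
    # Made-up daily distances (meters) that decline and then level off.
    print(first_stable_day([1000, 800, 700, 680, 670, 665]))
```

If the function returns `None`, the recorded baseline was too short or too variable to call the behavior stable under the chosen criteria.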

Q6: How do we establish "ground truth" to validate the digital phenotypes we identify from passive sensor data?

This is a critical step for ensuring the biological relevance of your findings.

- Methodology: The primary method is the use of Ecological Momentary Assessments (EMAs) [43]. These are brief, in-the-moment surveys delivered via the smartphone app to collect self-report data on daily behaviors and states.

- Application: In a study on substance use, participants would self-report instances of use. This data is then used to "train" the algorithm to detect future substance use events based on the passive digital phenotyping data (e.g., GPS location, keyboard activity) [43].

- Additional Strategies: Collect key ground-truth information during enrollment (e.g., home address, frequently visited locations) to contextualize GPS data. Automated annotation using ingestible sensors or geofenced locations can also provide objective ground truth [43].
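The geofencing strategy can be sketched as follows: each GPS fix is labeled with the nearest enrolled location whose radius contains it. The coordinates, radii, and the names `haversine_m` and `label_fix` are hypothetical and only illustrate the idea; a production pipeline would also need to handle GPS accuracy estimates and participant privacy.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two (lat, lon) points in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def label_fix(lat, lon, geofences):
    """Return the name of the nearest geofence containing the fix, else None.

    geofences: dict mapping name -> (lat, lon, radius_m), e.g. locations
    collected at enrollment.
    """
    best, best_d = None, float("inf")
    for name, (g_lat, g_lon, radius_m) in geofences.items():
        d = haversine_m(lat, lon, g_lat, g_lon)
        if d <= radius_m and d < best_d:
            best, best_d = name, d
    return best


if __name__ == "__main__":
    # Made-up enrollment locations with 100 m radii.
    fences = {"home": (52.0, 4.0, 100.0), "clinic": (52.01, 4.0, 100.0)}
    print(label_fix(52.0001, 4.0, fences))
    print(label_fix(52.005, 4.0, fences))
```

Labels produced this way can then be paired with EMA self-reports to build the training set for event detection.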

Experimental Protocols & Workflows

Detailed Methodology: Home-Cage Monitoring of Rodent Behavior

This protocol outlines the setup and operation for automated, long-term behavioral phenotyping in a home-cage environment, leveraging systems like the Noldus PhenoTyper or RFID-based platforms [39] [40].

1. Experimental Setup:

- Home Cage Configuration: Use a cage furnished with familiar bedding, chow, and water identical to vivarium conditions. Include environmental enrichment (e.g., nesting material, a shelter) to allow natural behaviors [39].

- System Calibration: For video tracking, maximize contrast between the animal and bedding. Calibrate the tracking software (e.g., EthoVision XT) for the specific cage size and lighting conditions [39].

- Animal Identification: For group-housed studies, employ an RFID system. Subcutaneously implant a miniature RFID microchip in each animal prior to the start of the experiment [40].

2. Data Acquisition:

- Initiate Monitoring: Place the animals in the prepared home cage and position the reader plate (for RFID) or ensure the overhead camera is correctly focused.

- Duration: Conduct recordings for a minimum of 3-4 days to capture multiple complete light/dark cycles and allow for behavioral stabilization [39]. Longer periods (weeks to months) are feasible for longitudinal studies.

- Parameters: Continuously record data for locomotor activity (e.g., total distance moved), time spent in different zones, feeding/drinking behavior, body temperature, and social proximity [39] [40] [41].

3. Data Analysis:

- Data Segmentation: Filter and segment the continuous data stream into relevant epochs (e.g., 12-hour light and dark phases) [39].

- Digital Biomarker Extraction: Analyze the data to extract relevant biomarkers. This may include circadian rhythm analysis, quantification of behavioral states (active vs. resting), and detection of changes in patterns over time [39].

- Validation: Correlate findings from home-cage monitoring with outcomes from traditional behavioral tests or pharmacological interventions to validate the digital biomarkers [39].
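The data segmentation step can be illustrated with a short sketch that bins timestamped distance samples into light and dark phases. The lights-on and lights-off hours (07:00 and 19:00), the sample layout, and the function name `distance_by_phase` are assumptions for this example; tracking software typically offers its own export and binning options.

```python
from collections import defaultdict
from datetime import datetime


def distance_by_phase(samples, lights_on_hour=7, lights_off_hour=19):
    """Total distance moved per (calendar date, phase).

    samples: iterable of (datetime, distance_moved_cm) tuples.
    A sample is assigned to "light" if its hour falls in
    [lights_on_hour, lights_off_hour), otherwise to "dark".
    """
    totals = defaultdict(float)
    for ts, dist in samples:
        phase = "light" if lights_on_hour <= ts.hour < lights_off_hour else "dark"
        totals[(ts.date().isoformat(), phase)] += dist
    return dict(totals)


if __name__ == "__main__":
    # Made-up samples for illustration only.
    data = [
        (datetime(2025, 1, 1, 8, 0), 10.0),
        (datetime(2025, 1, 1, 9, 0), 5.0),
        (datetime(2025, 1, 1, 20, 0), 30.0),
        (datetime(2025, 1, 2, 2, 0), 40.0),
    ]
    for key, total in sorted(distance_by_phase(data).items()):
        print(key, total)
```

Note that this simple version splits a dark phase that spans midnight across two calendar dates; for circadian analysis it may be preferable to key each dark phase to the date on which it started.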

Workflow Diagram: Home-Cage Monitoring Data Pipeline

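The logical flow of data in a home-cage monitoring study, from acquisition to analysis, follows the protocol above: experimental setup (cage configuration, system calibration, animal identification) → data acquisition (continuous recording over multiple light/dark cycles) → data segmentation into epochs → digital biomarker extraction → validation against traditional behavioral tests or pharmacological interventions.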

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key technologies and their functions in the field of digital phenotyping and home-cage monitoring.

| Item Name | Function / Application | Key Considerations |
|---|---|---|
| PhenoTyper / EthoVision XT [39] | Integrated home-cage and video-tracking system for automated, longitudinal behavior analysis of rodents. | Optimized for detailed locomotor and behavioral analysis; can be combined with biotelemetry and optogenetics [39]. |
| RFID Microchips & Mouse Matrix [40] [42] | Enables automatic, continuous monitoring of individual temperature, location, and activity in group-housed mice. | Essential for individual identification in social housing; provides reliable temperature data with high accuracy (±0.1°C) [40]. |
| Beiwe Research Platform [44] | Open-source platform for smartphone-based digital phenotyping, collecting raw sensor and phone-usage data. | Collects research-grade raw data for high flexibility in analysis; supports both iOS and Android via native apps [44]. |
| Polar H10 Chest Strap [38] | Wearable device for collecting accurate heart rate and heart rate variability (HRV) data. | Known for excellent data accuracy and battery life (up to 400 hours), suitable for physiological monitoring [38]. |
| ActiGraph GT9X [38] | Wearable inertial measurement unit (IMU) for reliable monitoring of physical activity and sleep. | Offers long-term battery support suitable for week-long recordings of movement data [38]. |

Integrating Network Pharmacology with In Vivo Validation for Complex Mechanisms

Frequently Asked Questions (FAQs) and Troubleshooting Guide

This guide addresses common challenges researchers face when integrating network pharmacology with in vivo studies, providing practical solutions to bridge computational predictions with experimental validation.

FAQ 1: How can I improve the predictive accuracy of my network pharmacology model to ensure more relevant in vivo outcomes?

- Challenge: The biological relevance of network pharmacology predictions is limited by database quality and algorithmic selection, leading to poor translation to in vivo models [45].

- Solution: Implement a multi-database strategy and leverage updated bioinformatics tools.

- Actionable Steps:

- Cross-reference multiple databases to gather compound and target information, such as TCMSP, HERB, and HIT, rather than relying on a single source [45].

- Utilize specialized databases like DisGeNET and GeneCards for comprehensive disease-target associations [46] [47].

- Apply the "Guidelines for Evaluation Methods in Network Pharmacology" to standardize your methodology and increase the reliability of your results [45].

- Troubleshooting Tip: If in vivo validation consistently fails for predicted targets, re-audit your database sources and filtering criteria for false positives.
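The multi-database strategy can be sketched as a simple consensus filter: keep only targets reported by at least a minimum number of independent sources. The function name `consensus_targets`, the two-source threshold, and the example hit lists are assumptions for illustration; the gene lists are made up and do not represent actual query results from TCMSP, HERB, or HIT.

```python
from collections import Counter


def consensus_targets(db_hits, min_sources=2):
    """Return targets reported by at least `min_sources` databases.

    db_hits: dict mapping database name -> iterable of gene symbols.
    Symbols are normalized (trimmed, upper-cased) and de-duplicated within
    each database, so one source cannot vote twice for the same target.
    """
    counts = Counter()
    for targets in db_hits.values():
        counts.update({t.strip().upper() for t in targets})
    return sorted(t for t, n in counts.items() if n >= min_sources)


if __name__ == "__main__":
    # Made-up hit lists for illustration only.
    hits = {
        "TCMSP": ["AKT1", "TNF", "il6"],
        "HERB": ["AKT1", "IL6", "STAT3"],
        "HIT": ["TNF", "JAK2"],
    }
    print(consensus_targets(hits))
```

Raising `min_sources` trades recall for precision, which is one practical lever when re-auditing filtering criteria after failed in vivo validation.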

FAQ 2: What strategies can bridge the translational gap between in silico predictions and in vivo validation?

- Challenge: A significant gap exists between pathway predictions from computational models and physiological responses in living organisms [48].

- Solution: Employ rigorous molecular docking and pilot studies before full-scale in vivo experiments.

- Actionable Steps:

- Validate binding affinity computationally using molecular docking tools (e.g., AutoDock Vina) against your hub targets before proceeding to animal studies [46] [47].

- Conduct a pilot in vivo study with a small group of animals to test the feasibility of your key hypotheses related to pathway modulation (e.g., JAK2/STAT3 or AKT1 signaling) [47].

- Troubleshooting Tip: If a compound shows high binding affinity in docking but no effect in vivo, investigate its bioavailability and metabolic stability.

FAQ 3: How do I handle multi-compound, multi-target mechanisms in a controlled in vivo setting?

- Challenge: Traditional "one drug–one target" models are inadequate for validating the synergistic, multi-target mechanisms of action typical of natural products like traditional Chinese medicine formulas [45].

- Solution: Design in vivo experiments that measure multiple endpoints across different signaling pathways.

- Actionable Steps:

- Base your experimental design on KEGG pathway enrichment analysis. For example, if your network analysis highlights TNF and apoptosis pathways, plan to measure related cytokines and proteins (e.g., IL-6, TNF-α, AKT1) [46].

- Measure a panel of biomarkers—including inflammatory cytokines, oxidative stress markers, and key phosphorylated proteins—to capture the holistic effect [46] [47].

- Troubleshooting Tip: When a multi-herbal formula shows efficacy, use fractionation and compound-specific knock-down studies in your in vivo model to identify the core active components.

FAQ 4: My in vitro cell-based models fail to predict in vivo toxicity. How can I improve model reliability?

- Challenge: Simplified in vitro models often lose vital characteristics of the target organ, leading to inaccurate predictions of drug toxicity in vivo [48].

- Solution: Upgrade in vitro models to better mimic human physiology.

- Actionable Steps:

- Incorporate diverse cell types representative of the target organ (e.g., including both hepatocytes and fibroblasts for liver models) instead of using a single cell line [48].

- Adopt advanced models like 3D human tissues or organ-on-a-chip (OoC) technologies that more accurately replicate human organ physiology and interactions [48] [49].