Evaluating Database Search Algorithms for Ubiquitination Site Prediction: A Comprehensive Guide for Biomedical Researchers

This article provides a systematic evaluation of database search algorithms for ubiquitination site identification, addressing both computational prediction tools and mass spectrometry-based methods.

Evaluating Database Search Algorithms for Ubiquitination Site Prediction: A Comprehensive Guide for Biomedical Researchers

Abstract

This article provides a systematic evaluation of database search algorithms for ubiquitination site identification, addressing both computational prediction tools and mass spectrometry-based methods. We explore foundational biological concepts of ubiquitination and examine diverse algorithmic approaches including traditional machine learning, deep learning architectures, and advanced mass spectrometry techniques like DIA-MS. The content covers practical implementation strategies, troubleshooting common challenges with imbalanced data and experimental workflows, and rigorous validation methodologies. Aimed at researchers, scientists, and drug development professionals, this resource offers critical insights for selecting, optimizing, and validating ubiquitination site detection methods to advance understanding of cellular regulation and therapeutic development.

Ubiquitination Biology and Computational Prediction Fundamentals

The Biological Significance of Ubiquitination in Cellular Regulation and Disease

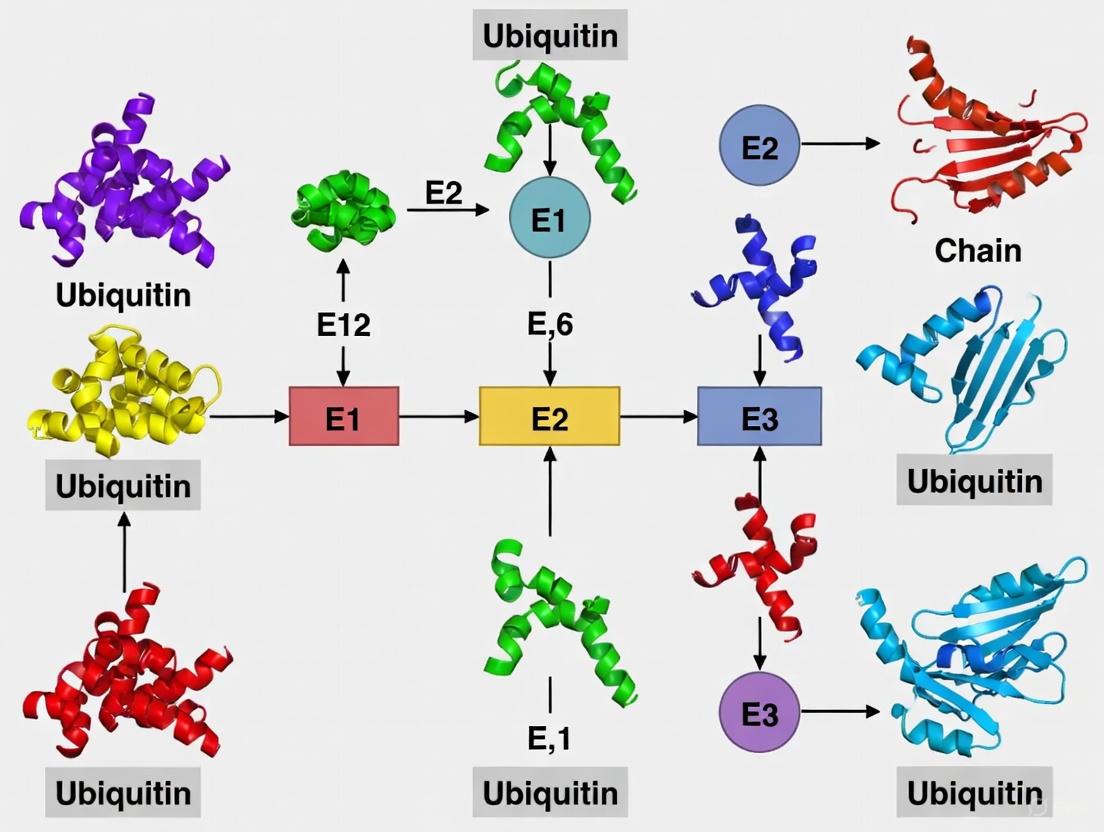

Ubiquitination is a reversible post-translational modification (PTM) characterized by the covalent attachment of ubiquitin, a 76-amino acid protein, to lysine (K) residues on target proteins [1] [2]. This highly conserved process requires a sequential enzymatic cascade involving E1 (activation), E2 (conjugation), and E3 (ligation) enzymes that ultimately attach the C-terminal glycine of ubiquitin to the ε-amino group of substrate lysines [2]. The resulting modification serves as a versatile regulatory signal that extends far beyond its initial recognition as a mere marker for proteasomal degradation. The biological significance of ubiquitination spans virtually all eukaryotic cellular processes, including DNA repair, cell cycle control, transcriptional regulation, signal transduction, endocytosis, and immune response [1] [2] [3].

The critical importance of ubiquitination in maintaining cellular homeostasis becomes strikingly evident when this system becomes dysregulated. Abnormal ubiquitination has been implicated in numerous pathological conditions, including cancer, autoimmune disorders, inflammatory diseases, diabetes, and neurodegenerative conditions such as Alzheimer's and Parkinson's disease [2] [4]. The ubiquitin-proteasome system regulates the stability of key regulatory proteins including tumor suppressors, oncoproteins, and cell cycle regulators, making it a crucial focus for therapeutic development [5] [3]. Consequently, comprehensive understanding and accurate identification of ubiquitination sites have become essential objectives in biomedical research, driving the development of both experimental and computational approaches for ubiquitination site detection.

Experimental Methods for Ubiquitination Site Detection

Mass Spectrometry-Based Approaches

Mass spectrometry (MS) has emerged as the predominant experimental method for large-scale identification, mapping, and quantification of ubiquitination sites [2]. The typical MS-based workflow involves several critical steps: (1) enrichment of ubiquitinated peptides using affinity reagents such as specific antibodies or ubiquitin-binding domains; (2) proteolytic digestion (usually with trypsin or Glu-C); (3) liquid chromatography separation; (4) tandem mass spectrometry analysis; and (5) computational analysis of resulting spectra for site identification [6] [3].

Recent methodological advances have significantly improved the sensitivity and specificity of ubiquitination site detection. One notable innovation involves the use of engineered protein affinity reagents, such as the GST-qUBA reagent consisting of four tandem repeats of ubiquitin-associated domain from UBQLN1 fused to a GST tag [6]. This approach enabled the isolation of polyubiquitinated proteins and identification of 294 endogenous ubiquitination sites on 223 proteins from human 293T cells without requiring proteasome inhibitors or ubiquitin overexpression [6]. Interestingly, mitochondrial proteins constituted 14.7% of the identified sites, implicating ubiquitination in a wide range of previously underappreciated mitochondrial functions [6].

Despite its power, MS-based identification faces several challenges, including the rapid turnover of ubiquitinated proteins, the large size of the ubiquitin modifier, and the dynamic nature of the modification itself [3]. Furthermore, these experimental approaches remain expensive, time-consuming, and labor-intensive, prompting the development of complementary computational methods for large-scale ubiquitination site prediction [1] [2].

Table 1: Essential Research Reagents for Ubiquitination Studies

| Reagent Type | Specific Examples | Function/Application |

|---|---|---|

| Affinity Reagents | GST-qUBA, Ubiquitin-binding domains (UBA, UIM, UBAN) | Enrichment of ubiquitinated proteins/peptides for mass spectrometry |

| Enzymes | E1 activating enzymes, E2 conjugating enzymes, E3 ligases, Deubiquitinases (DUBs) | Studying ubiquitination machinery and reversal mechanisms |

| Cell Lines | HEK293T, Yeast mutant strains (CDC34tm, grr1Δ) | Model systems for studying ubiquitination pathways |

| Protease Inhibitors | Proteasome inhibitors (MG132, Bortezomib) | Stabilization of ubiquitinated proteins by blocking degradation |

| Specific Antibodies | Anti-ubiquitin, Anti-diGly remnant antibodies | Immunoprecipitation and detection of ubiquitinated proteins |

| Database Resources | PLMD, mUbiSiDa, dbPTM, UniProt | Curated repositories of experimentally validated ubiquitination sites |

Figure 1: Ubiquitination Enzymatic Cascade. The three-step enzymatic process of ubiquitin attachment involving E1, E2, and E3 enzymes.

Computational Prediction of Ubiquitination Sites

Evolution of Prediction Methodologies

The limitations of experimental approaches for ubiquitination site identification have stimulated the development of numerous computational prediction tools. These methods have evolved substantially from early feature-based machine learning approaches to contemporary deep learning architectures. Initial methods primarily relied on manually crafted features such as amino acid composition (AAC), position-specific scoring matrices (PSSM), physico-chemical properties (PCPs), and composition of k-spaced amino acid pairs (CKSAAP) combined with classifiers like Support Vector Machines (SVM) and Random Forests [1] [2] [7].

Notable early tools included UbPred, which utilized random forest classifiers and achieved 72% accuracy with AUC of 0.80 [3], and UbiSite, which fused multiple features into a two-layer SVM model [1]. However, these traditional machine learning approaches often struggled with feature engineering limitations, requiring extensive domain expertise and potentially introducing bias through redundant or incomplete feature representations [1].

The field has subsequently witnessed a significant shift toward deep learning approaches that can automatically learn relevant features from large-scale data. Modern architectures including convolutional neural networks (CNNs), multimodal deep architectures, and capsule networks have demonstrated remarkable improvements in prediction accuracy [1] [2] [4]. For instance, the multimodal deep architecture described in [1] processes raw protein sequences, physico-chemical properties, and evolutionary profiles through separate sub-networks, achieving 66.43% accuracy on the large-scale PLMD database.

Comparative Performance of Prediction Tools

Table 2: Performance Comparison of Ubiquitination Site Prediction Tools

| Tool | Methodology | Accuracy | Sensitivity | Specificity | AUC | MCC |

|---|---|---|---|---|---|---|

| Multimodal Deep Architecture [1] | Multimodal CNN | 66.43% | 66.7% | 66.4% | - | 0.221 |

| UbPred [3] | Random Forest | 72.0% | - | - | 0.80 | - |

| ESA-UbiSite [8] | Evolutionary Screening + SVM | 92.0% | - | - | - | - |

| UbiNets [9] | DenseNet Architecture | 92.0% | - | - | - | - |

| MDCapsUbi [4] | Capsule Network | 91.82% | 91.39% | 92.24% | 0.97 | 0.837 |

| Hybrid DL Model [2] | Deep Learning with Hand-crafted Features | 81.98% | 91.47% | - | - | - |

Recent benchmarking studies have provided valuable insights into the relative performance of different computational approaches. A comprehensive 2023 evaluation comparing ten machine learning-based approaches across three categories (feature-based conventional ML, end-to-end sequence-based DL, and hybrid feature-based DL) revealed that deep learning methods consistently outperformed classical machine learning approaches [2]. The best-performing model achieved a 0.902 F1-score, 0.8198 accuracy, 0.8786 precision, and 0.9147 recall using a hybrid approach that combined raw amino acid sequences with hand-crafted features [2].

Interestingly, this study also discovered a positive correlation between model performance and the length of amino acid fragments used for training, suggesting that utilizing entire protein sequences rather than short windows around candidate sites may yield more accurate predictions [2]. This finding has significant implications for future method development and highlights the importance of considering contextual protein information beyond immediate flanking regions.

Advanced Deep Learning Architectures for Ubiquitination Site Prediction

Multimodal Deep Architecture

The multimodal deep architecture represents a significant advancement in ubiquitination site prediction by addressing three key challenges: limitations of artificially designed features, heterogeneity among different feature types, and unbalanced distribution between positive and negative samples [1]. This approach processes three distinct protein modality representations through specialized sub-networks:

- Raw Sequence Processing: One-hot encoded protein sequence fragments are processed through 1D convolutional neural networks (CNNs) to detect implicit sequence patterns [1].

- Physico-chemical Properties: Thirteen carefully selected physico-chemical properties strongly associated with ubiquitination are analyzed through stacked fully connected layers [1].

- Evolutionary Profiles: Position-specific scoring matrices (PSSM) generated via BLAST searches against Swiss-Prot provide evolutionary constraints and are processed through additional CNN layers [1].

The outputs from these three sub-networks are subsequently merged to build the final prediction model. This architecture demonstrated its effectiveness on the Protein Lysine Modification Database (PLMD), which contains 121,742 ubiquitination sites from 25,103 proteins, making it one of the most comprehensive assessments of computational ubiquitination site prediction to date [1].

Capsule Network-Based Approaches

More recently, capsule networks have emerged as promising alternatives to traditional CNNs for ubiquitination site prediction. The MDCapsUbi model represents a sophisticated implementation of this approach, addressing several limitations of conventional deep learning methods [4]. This architecture consists of three main components:

- Sequence Encoding Module: Intercepts raw protein sequences into fragments and encodes amino acids as numerical vectors [4].

- Multi-dimensional Feature Recognition Module: Identifies hidden features through convolution operations and channel attention mechanisms across both sequence and feature map dimensions [4].

- Capsule Network Module: Fuses and refines features from multiple dimensions into capsule vectors that effectively represent hierarchical relationships between low-level and high-level features [4].

A key advantage of capsule networks is their ability to preserve spatial relationships between features through vector-based representations rather than the scalar activations used in traditional CNNs. This enables more effective modeling of complex motifs and patterns associated with ubiquitination sites [4]. The MDCapsUbi model achieved impressive performance metrics with 91.82% accuracy, 91.39% sensitivity, 92.24% specificity, 0.837 MCC, and 0.97 AUC using ten-fold cross-validation [4].

Figure 2: MDCapsUbi Architecture. The capsule network-based model for ubiquitination site prediction incorporating multi-dimensional feature recognition.

Specialized Ubiquitination Databases

The development and validation of computational prediction tools rely heavily on comprehensive, well-curated databases of experimentally verified ubiquitination sites. Several specialized resources have been developed to address this need:

PLMD (Protein Lysine Modification Database): This specialized database contains 20 types of protein lysine modifications, extending from CPLA 1.0 and CPLM 2.0 datasets [1]. The latest version includes 25,103 proteins with 121,742 ubiquitination sites, making it the largest available resource for ubiquitination site prediction [1] [4].

mUbiSiDa (Mammalian Ubiquitination Site Database): This comprehensive resource focuses specifically on mammalian ubiquitination sites, containing approximately 35,494 experimentally validated ubiquitinated proteins with 110,976 ubiquitination sites across five species [5]. Approximately 95% of these sites are from human and mouse, providing a valuable resource for biomedical research [5].

dbPTM: This general PTM database incorporates substantial ubiquitination site information and has been used in several benchmarking studies [2]. The 2019 and 2022 versions have provided standardized datasets for fair comparison of different prediction methods [2].

These databases not only facilitate information retrieval but also enable studies of cross-regulation between different post-translational modifications and investigation of molecular mechanisms underlying protein stability-related cellular processes [5].

Data Processing and Curation

High-quality database construction requires rigorous data processing to ensure reliability and minimize bias. Common procedures include:

- Data Collection: Experimentally identified ubiquitination sites are gathered from published literature through PubMed searches and international databases like UniProt [5].

- Homology Reduction: Tools like CD-HIT are employed to remove similar protein sequences (typically at 40% similarity threshold) to prevent overrepresentation of certain protein families [1] [4].

- Validation: Manual curation eliminates predicted lysine modifications without reference support, ensuring only experimentally verified sites are included [5].

- Dataset Partitioning: Proteins are divided into training and testing datasets via random partition for model construction and evaluation [1].

These meticulous curation processes are essential for developing unbiased predictive models and generating reliable benchmarking datasets for tool comparison.

Implications for Disease Mechanisms and Therapeutic Development

The central role of ubiquitination in cellular regulation directly links its dysregulation to numerous disease pathways. Computational analyses have revealed that proteins involved in specific functional categories display particularly high extents of ubiquitination. In the human proteome, cytoskeletal proteins, cell cycle regulators, and cancer-associated proteins show significantly higher levels of predicted ubiquitination sites compared to proteins from other functional categories [3].

Notably, gain or loss of ubiquitination sites may represent a molecular mechanism underlying numerous disease-associated mutations [3]. For example, aberrant ubiquitination of tumor suppressor proteins or oncoproteins can disrupt normal cellular growth control, contributing to cancer development [5] [3]. In neurodegenerative diseases, impaired ubiquitin-proteasome function leads to abnormal protein accumulation, a hallmark of conditions like Alzheimer's and Parkinson's disease [2] [4].

The improved accuracy of ubiquitination site prediction tools has significant implications for drug development. As the ubiquitin-proteasome system gains recognition as a therapeutic target, computational identification of ubiquitination sites can guide the development of targeted therapies that modulate specific ubiquitination events. Several successful drugs already target this system, including proteasome inhibitors used in cancer treatment, and emerging strategies aim to develop specific E3 ligase inhibitors or activators for more precise therapeutic interventions [4].

The field of ubiquitination site prediction has evolved dramatically from early feature-based machine learning approaches to sophisticated deep learning architectures that automatically extract relevant patterns from large-scale biological data. Current state-of-the-art methods, particularly multimodal deep architectures and capsule networks, have demonstrated remarkable performance improvements, achieving accuracy levels exceeding 90% in some implementations [1] [4].

Future developments will likely focus on several promising directions. Integration of additional contextual information, such as protein structural features and interaction network data, may further enhance prediction accuracy. Species-specific modeling approaches that account for differences in ubiquitination machinery across organisms will improve the relevance of predictions for particular experimental systems [7]. Additionally, the development of explainable AI methods that provide biological insights alongside predictions will increase the utility of these tools for hypothesis generation and experimental design.

As these computational methods continue to mature, they will play an increasingly vital role in bridging the gap between large-scale proteomic data and biological understanding, ultimately accelerating research into the fundamental mechanisms of cellular regulation and disease pathogenesis. The integration of computational predictions with targeted experimental validation represents a powerful strategy for comprehensively mapping the ubiquitin landscape and exploiting this knowledge for therapeutic benefit.

Protein ubiquitination, the process by which a small regulatory protein called ubiquitin is covalently attached to target proteins, represents one of the most important post-translational modifications (PTMs) in eukaryotic cells [10] [11]. This versatile modification regulates diverse fundamental features of protein substrates, including stability, activity, and localization, with dysregulation leading to many pathologies such as cancer and neurodegenerative diseases [11]. The systematic study of ubiquitination has generated massive datasets requiring specialized bioinformatics resources for organization, annotation, and dissemination. Three databases have emerged as cornerstone resources for the ubiquitination research community: dbPTM, PLMD, and PhosphoSitePlus. This comparison guide provides an objective evaluation of these resources within the broader context of evaluating different database search algorithms for ubiquitination site research, enabling researchers to select the most appropriate tools for their specific investigative needs.

dbPTM: An Integrated Resource for Multiple PTM Types

The dbPTM database represents a comprehensive resource that integrates experimentally verified PTMs from multiple sources including UniProtKB/Swiss-Prot, PhosphoSitePlus, and manual curation of literature [12]. In its 2022 update, dbPTM accumulated over 2.77 million PTM substrate sites, with more than 2.23 million entries being experimentally verified [12]. While encompassing numerous modification types, its ubiquitination data is substantial, with current statistics showing 456,653 ubiquitination sites in its collection [13]. A key strength of dbPTM is its focus on functional and structural analyses for PTM sites, including information on upstream regulatory proteins and their integration into protein-protein interaction networks [12]. The database also incorporates disease associations based on non-synonymous single nucleotide polymorphisms (nsSNPs) that occur near PTM sites, providing clinical context to the modification data [13].

PLMD: A Specialized Protein Lysine Modification Database

The Protein Lysine Modification Database (PLMD) takes a specialized approach, focusing exclusively on PTMs occurring at lysine residues [14]. This dedicated focus has enabled PLMD to become one of the most comprehensive resources for ubiquitination and other lysine-directed modifications. The database contains 284,780 modification events across 53,501 proteins from 176 eukaryotes and prokaryotes, covering 20 different types of lysine modifications [14]. PLMD is particularly valuable for studying crosstalk between different modification types on the same lysine residues, having identified 65,297 PLM events involved in 90 types of PLM co-occurrences [14]. The database's specialized nature makes it particularly useful for researchers specifically investigating the complex interplay of modifications at lysine residues, which serve as the exclusive attachment points for ubiquitin.

PhosphoSitePlus: A Leading PTM Resource with Extensive Curation

PhosphoSitePlus (PSP) represents one of the most extensive and highly curated resources for PTM information, originally focusing on phosphorylation but subsequently expanding to include ubiquitination, acetylation, and other modifications [15] [16] [17]. Created with grant support from the NIH and curated by Cell Signaling Technology scientists, PSP is uniquely characterized by its manual curation process that has been maintained for over fifteen years, with more than 20,000 articles compiled [17]. This resource contains over 500,000 PTM sites collectively, with phosphorylation, ubiquitylation, and acetylation sites representing over 90% of the modification types [17]. PSP integrates thousands of disease mutations, allowing researchers to analyze intersections between genetic variants and PTM sites [17]. The database also provides information on upstream-downstream relationships and regulatory networks, making it particularly valuable for signaling pathway analysis.

Comparative Analysis of Database Features

Table 1: Core Database Characteristics and Ubiquitination Content

| Feature | dbPTM | PLMD | PhosphoSitePlus |

|---|---|---|---|

| Primary Focus | Comprehensive PTM resource | Exclusive lysine modifications | Multi-PTM with signaling emphasis |

| Total Ubiquitination Sites | 456,653 [13] | 121,742 (in PLMD 3.0) [18] | 18,996 (as of 2011, significant growth since) [16] |

| Data Sources | Public DBs, manual literature curation | Manual literature curation, specialized datasets | Manual LTP curation, HTP MS datasets |

| Species Coverage | Broad, multiple organisms | 176 eukaryotes and prokaryotes [14] | Predominantly mammalian (99.7%) [16] |

| Ubiquitin Linkage Information | Limited | Not specialized | Limited, though some linkage-specific data |

| Regulatory Network Integration | Upstream regulatory proteins, PPI networks [12] | Motif analysis, modification crosstalk [14] | Kinase-substrate relationships, pathway context |

| Disease Association | nsSNP integration [13] | Limited disease focus | Extensive disease mutation integration [17] |

| Update Frequency | Regular updates | Version-based updates | Continuous updates with NIH support |

Table 2: Experimental and Analytical Method Support

| Methodological Aspect | dbPTM | PLMD | PhosphoSitePlus |

|---|---|---|---|

| MS Data Integration | Extensive MS-based proteomics data [12] | LC-MS techniques, pan-antibody data [14] | Extensive HTP MS datasets, LTP validation [16] [17] |

| Antibody-Based Data | Incorporated | Specialized anti-diGly antibody data [14] | Strong antibody validation, commercial links [16] |

| Computational Predictions | Integrated prediction tools | Motif-based analysis [14] | Limited prediction focus |

| Curation Approach | Hybrid: automated + manual | Manual literature curation | Extensive manual curation (>20,000 articles) [17] |

| Tool Integration | PTM prediction resources | Limited tool integration | Sequence logos, Cytoscape plugin, BioPAX [16] |

| Data Export Capabilities | Available | Multiple access options [14] | Extensive download options |

Ubiquitination Site Identification Methods

The databases rely on complementary experimental methodologies for ubiquitination site identification, which influences the nature and quality of their data:

Mass Spectrometry Approaches: All three databases heavily incorporate mass spectrometry data, with particular emphasis on enrichment strategies to overcome the low stoichiometry of ubiquitination. These include antibody-based enrichment using anti-diGly antibodies that recognize the glycine-glycine remnant left on trypsin-digested ubiquitinated peptides [14] [11], ubiquitin tagging approaches expressing epitope-tagged ubiquitin (e.g., His, Strep) for affinity purification [11], and ubiquitin-binding domain (UBD) based methods using tandem-repeated Ub-binding entities for higher affinity capture [11].

Experimental Validation Methods: Traditional biochemical approaches remain important, including immunoblotting with anti-ubiquitin antibodies following lysine-to-arginine mutations to validate specific modification sites [11]. While low-throughput, these methods provide functional validation that complements high-throughput MS identifications.

Experimental Workflow for Ubiquitination Site Detection and Database Integration

Research Applications and Integration with Analysis Tools

Pathway Analysis and Visualization

The integration of ubiquitination data with pathway analysis tools represents a growing area of development. PTMNavigator, recently introduced as part of the ProteomicsDB platform, provides interactive visualization of PTM data within signaling pathways [19]. This tool enables researchers to overlay experimental ubiquitination data onto ~3000 canonical pathways from manually curated databases, allowing for the examination of how ubiquitination events regulate cellular signaling networks [19]. The software automatically runs kinase and pathway enrichment algorithms whose results are directly integrated into the visualization, providing a comprehensive view of the intricate relationship between PTMs and signaling pathways [19].

Computational Prediction of Ubiquitination Sites

Complementing the experimental data within these databases, numerous computational approaches have been developed to predict ubiquitination sites, which can inform subsequent experimental validation:

Machine Learning and Deep Learning Approaches: Recent advances have demonstrated the effectiveness of deep learning architectures for large-scale ubiquitination site prediction. Multimodal deep architectures that integrate raw protein sequence fragments, physico-chemical properties, and position-specific scoring matrices (PSSM) have shown superior performance compared to traditional feature-based methods [18]. Hybrid models using both raw amino acid sequences and hand-crafted features with deep neural networks have achieved performance metrics up to 0.902 F1-score and 0.8198 accuracy [10].

Feature Selection for Prediction: Critical features for successful ubiquitination site prediction include evolutionary information captured in PSSM profiles, physico-chemical properties of amino acids (e.g., isoelectric point, entropy of formation, flexibility parameters), and sequence-based patterns around candidate ubiquitination sites [18] [10]. These computational approaches are particularly valuable for directing experimental resources toward high-probability ubiquitination sites.

Ubiquitination Cascade and Functional Outcomes Annotated in Databases

Table 3: Key Research Reagents and Computational Tools for Ubiquitination Studies

| Resource Type | Specific Examples | Research Application | Database Integration |

|---|---|---|---|

| Linkage-Specific Antibodies | K48-, K63-, M1-linkage specific antibodies [11] | Enrichment and detection of specific ubiquitin chain types | PhosphoSitePlus, PLMD |

| Epitope Tags for Affinity Purification | His, Strep, HA, Flag tags [11] | Purification of ubiquitinated proteins in tagging systems | PLMD, dbPTM |

| Pan-Ubiquitin Antibodies | P4D1, FK1/FK2 antibodies [11] | General detection and enrichment of ubiquitinated proteins | All databases |

| Deubiquitinase Inhibitors | PR-619, P22077 | Stabilizing ubiquitination events by preventing deubiquitination | Limited integration |

| Proteasome Inhibitors | MG132, Bortezomib | Accumulation of polyubiquitinated proteins destined for degradation | Limited integration |

| Computational Prediction Tools | DeepUbiquitylation, UbiPred, iUbiq-Lys [18] [10] | In silico identification of potential ubiquitination sites | dbPTM |

| Pathway Analysis Platforms | PTMNavigator, Cytoscape with PhosphoPath [19] | Contextualizing ubiquitination in signaling networks | PhosphoSitePlus |

The comparative analysis of dbPTM, PLMD, and PhosphoSitePlus reveals complementary strengths that can guide researchers in selecting appropriate databases for specific investigative contexts. dbPTM excels as a comprehensive multi-PTM resource with extensive integration of computationally predicted features and structural analyses. PLMD provides specialized focus on lysine modifications with detailed information on modification crosstalk, making it invaluable for studying the complex interplay at specific lysine residues. PhosphoSitePlus offers unparalleled manual curation depth with strong emphasis on biological context and disease associations.

For researchers designing studies of ubiquitination sites, we recommend a sequential database approach: beginning with PhosphoSitePlus for its curated functional annotations and disease context, expanding to PLMD for detailed analysis of lysine modification crosstalk, and utilizing dbPTM for structural insights and integration with computational prediction tools. The emerging integration of these resources with visualization platforms like PTMNavigator represents a promising direction for contextualizing ubiquitination within broader signaling networks, ultimately accelerating our understanding of this critical regulatory mechanism in health and disease.

Ubiquitination is a crucial post-translational modification (PTM) that involves the covalent attachment of a 76-residue ubiquitin protein to lysine (K) residues on substrate proteins [20]. This modification regulates diverse cellular processes, including targeted protein degradation, subcellular trafficking, and protein-protein interactions [20]. During mass spectrometry (MS) analysis, tryptic digestion of ubiquitinated proteins generates a characteristic di-glycine (K-GG) remnant attached to the modified lysine residue, resulting in a detectable mass shift of +114.0429 Da [20]. The identification of these K-GG modified peptides is essential for understanding ubiquitination's role in various biological processes and disease mechanisms, such as cancer and neurodegeneration [20] [1].

Within the broader context of evaluating database search algorithms for ubiquitination site research, accurate detection methods form the foundational data layer upon which algorithmic performance depends. This guide objectively compares the performance characteristics of K-GG peptide enrichment against alternative methodologies, providing researchers with experimental data to inform their proteomics workflow design.

Fundamental Principles and Methodologies

The Ubiquitination Process and Mass Spectrometry Detection

The ubiquitination cascade involves a sequential enzymatic mechanism: an E1 activating enzyme charges ubiquitin, which is transferred to an E2 conjugating enzyme, and an E3 ligase finally facilitates ubiquitin attachment to the substrate protein [20]. In proteomics analysis, tryptic digestion cleaves proteins after arginine and lysine residues, but when a lysine is modified by ubiquitination, trypsin cannot cleave at that site. Instead, the C-terminal glycine-glycine motif of ubiquitin remains attached to the modified lysine, creating the distinctive K-GG signature that can be identified via mass spectrometry [20].

Mass spectrometry detects ubiquitination sites through several approaches. In MS1 spectra, the K-GG modification produces a characteristic mass shift, while in tandem MS/MS, fragmentation patterns reveal sequence information including the modified residue [20] [21]. Different fragmentation techniques yield distinct fragment patterns: collision-induced dissociation (CID) primarily generates b and y ions, while electron-transfer dissociation (ETD) produces c and z ions and better preserves labile post-translational modifications [21] [22].

K-GG Peptide Immunoaffinity Enrichment Workflow

K-GG peptide immunoaffinity enrichment employs antibodies specifically raised against the di-glycine remnant motif to selectively isolate modified peptides from complex protein digests [20]. The typical workflow begins with protein extraction from biological samples, often using RIPA or Nonidet P-40 buffer systems supplemented with protease inhibitors to preserve modifications [20]. Following extraction, proteins undergo reduction and alkylation to break disulfide bonds and prevent reformation, then tryptic digestion to generate peptides including K-GG modified species [20].

The critical enrichment step involves incubating the peptide mixture with anti-K-GG antibodies conjugated to solid supports. After extensive washing to remove non-specifically bound peptides, the enriched K-GG peptides are eluted for LC-MS/MS analysis [20]. This method has demonstrated capability to identify thousands of ubiquitination sites from just 1 mg of input material, making it exceptionally efficient for global ubiquitinome profiling [20]. Recent advancements include tandem enrichment approaches like SCASP-PTM that enable simultaneous purification of ubiquitinated, phosphorylated, and glycosylated peptides from a single sample without intermediate desalting steps [23].

Performance Comparison of Ubiquitination Site Detection Methods

Experimental Data and Quantitative Performance Metrics

Direct comparison of K-GG peptide immunoaffinity enrichment with alternative methods reveals significant performance differences. In a controlled study using SILAC-labeled lysates, researchers quantitatively compared abundances of individual K-GG peptides from samples prepared in parallel using different methods [20]. The results demonstrated that K-GG peptide immunoaffinity enrichment consistently yielded greater than fourfold higher levels of modified peptides than affinity-purification mass spectrometry (AP-MS) approaches [20].

Table 1: Quantitative Comparison of Ubiquitination Site Detection Methods

| Method | Sensitivity | Specificity | Number of Sites Identified | Starting Material | Key Applications |

|---|---|---|---|---|---|

| K-GG Peptide Immunoaffinity Enrichment | ~66.7% [1] | ~66.4% [1] | >5,000 sites [20] | 1 mg protein [20] | Global ubiquitinome profiling, focused site mapping |

| Protein-Level AP-MS | Lower than K-GG method [20] | Similar to K-GG method | Limited sites per experiment [20] | 10 mg protein [20] | Specific protein complex analysis |

| Computational Prediction | 66.7% [1] | 66.4% [1] | Large-scale in silico prediction [1] | N/A | Pre-screening, hypothesis generation |

| Gel-Based Methods | Variable, often insufficient [20] | High when detected | Limited by sensitivity [20] | Large amounts required [20] | High-abundance substrates |

For specific substrates including HER2, DVL2, and TCRα, K-GG peptide immunoaffinity enrichment consistently revealed additional ubiquitination sites beyond those identified through protein-level AP-MS experiments [20]. This enhanced detection capability provides more comprehensive ubiquitination mapping for individual proteins of interest. The method has proven particularly valuable for characterizing inducible ubiquitination events, such as those affecting multiple members of the T-cell receptor complex under endoplasmic reticulum stress conditions [20].

Technical Advantages and Methodological Limitations

K-GG immunoaffinity enrichment offers several distinct advantages over alternative approaches. The method enables direct identification of modification sites rather than inferring them through mutagenesis, overcoming challenges associated with functional redundancy when preferred lysine sites are mutated [20]. Additionally, the technique requires less starting material than conventional AP-MS approaches—successfully identifying sites from just 1 mg of input material compared to 10 mg typically used for immunoprecipitation-based methods [20].

However, the method does present certain limitations. The requirement for specific high-quality antibodies represents a potential constraint, and the technique may still miss low-abundance ubiquitination events despite its enhanced sensitivity. Furthermore, like other antibody-based methods, it may exhibit sequence context biases where certain K-GG peptide motifs are enriched more efficiently than others. These limitations highlight why multiple complementary approaches continue to be valuable in ubiquitination research.

Table 2: Methodological Characteristics Across Ubiquitination Detection Approaches

| Characteristic | K-GG Peptide Enrichment | Protein-Level AP-MS | Gel-Based Methods | Computational Prediction |

|---|---|---|---|---|

| Site Resolution | Direct identification of modified lysines [20] | Indirect, requires additional MS | Direct identification after gel separation [20] | In silico prediction only [1] |

| Sensitivity | High (4× more than AP-MS) [20] | Moderate | Variable, often limited [20] | Not applicable |

| Throughput | High for global profiling [20] | Lower, target-specific | Low | Very high [1] |

| Resource Requirements | Specialized antibodies, MS instrumentation | Specific antibodies, MS | Standard protein lab equipment | Computational resources |

| Typical Applications | Ubiquitinome profiling, focused site mapping [20] | Specific protein complexes | High-abundance substrates | Pre-screening, large-scale analysis [1] |

Implementation Considerations and Research Applications

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of K-GG enrichment requires specific reagents and optimization at each workflow stage. The following essential materials represent critical components for effective ubiquitination site detection.

Table 3: Essential Research Reagents for K-GG Enrichment Studies

| Reagent Category | Specific Examples | Function and Importance |

|---|---|---|

| Cell Lysis Buffers | RIPA buffer, Nonidet P-40 buffer [20] | Protein extraction while preserving ubiquitination states |

| Protease Inhibitors | EDTA-free protease inhibitor mixtures [20] | Prevent degradation of ubiquitinated proteins during preparation |

| Proteasomal Inhibitors | MG132 [20] | Stabilize ubiquitinated proteins by blocking degradation |

| Enrichment Antibodies | Anti-di-glycine remnant (K-GG) antibodies [20] | Specific isolation of ubiquitinated peptides from complex mixtures |

| Chromatography Media | Protein A/G agarose beads, anti-FLAG M2 beads [20] | Solid supports for immunoaffinity purification |

| Digestion Enzymes | Sequencing-grade trypsin [20] | Generates K-GG modified peptides from ubiquitinated proteins |

| Mass Spec Standards | SILAC-labeled lysates [20] | Enable quantitative comparisons across experimental conditions |

Practical Implementation and Data Analysis

Effective implementation of K-GG enrichment protocols requires attention to several practical considerations. Sample preparation should include proteasomal inhibitors like MG132 to stabilize ubiquitinated proteins, and lysis conditions must balance complete protein extraction with preservation of ubiquitination states [20]. For LC-MS/MS analysis, data-dependent acquisition methods efficiently select intense ions from MS1 for fragmentation, while data-independent acquisition approaches like those mentioned in SCASP-PTM protocols provide complementary coverage [23].

For database searching, algorithms must account for the +114.0429 Da mass shift on modified lysines and account for potential missed cleavages at these sites [20]. The multimodal deep architectures recently developed for computational prediction achieve approximately 66.4% accuracy and 0.221 MCC value, providing potential supplementary approaches to experimental methods [1]. When interpreting results, researchers should consider that K-GG enrichment may capture both conventional ubiquitination and other ubiquitin-like modifications that generate similar di-glycine remnants, necessitating careful validation of important findings through orthogonal methods.

K-GG peptide immunoaffinity enrichment represents a highly effective method for ubiquitination site mapping, offering superior sensitivity and comprehensive coverage compared to protein-level AP-MS and gel-based approaches. The method's ability to identify thousands of modification sites from minimal starting material has significantly advanced large-scale ubiquitinome profiling studies. While computational prediction methods continue to evolve, mass spectrometry-based detection with prior enrichment remains the gold standard for experimental validation of ubiquitination sites.

The selection of appropriate detection methodologies fundamentally influences the quality of data used for evaluating database search algorithms in ubiquitination research. As mass spectrometry technologies advance and enrichment protocols become more refined, the research community can expect increasingly comprehensive ubiquitination site atlases that will further illuminate this critical regulatory mechanism in health and disease.

Ubiquitination, the covalent attachment of a small regulatory protein to substrate proteins, represents a crucial post-translational modification that governs diverse cellular functions including protein degradation, DNA repair, and signal transduction [24] [25]. Traditional experimental methods for ubiquitination site identification—including mass spectrometry (MS), immunoprecipitation (IP), and proximity ligation assay (PLA)—have provided valuable insights but remain costly, time-consuming, and technically challenging [25] [10]. The limitations are particularly evident in detecting low-stoichiometry modifications and characterizing ubiquitin chain architecture, creating a critical need for computational approaches that can complement experimental methods [26] [25].

The evolution of computational prediction tools has progressed through distinct phases: from early feature-based machine learning models to contemporary deep learning frameworks that leverage representation learning and ensemble strategies. This guide provides a systematic comparison of current ubiquitination site prediction tools, evaluating their methodologies, performance metrics, and practical applications for researchers in proteomics and drug development.

Traditional Experimental Methods: Foundations and Limitations

Conventional approaches for ubiquitination characterization rely on biochemical techniques with inherent constraints. Immunoblotting using anti-ubiquitin antibodies (e.g., P4D1, FK1/FK2) enables detection of ubiquitinated substrates but offers low throughput and limited site-specific resolution [25]. MS-based proteomics has emerged as the dominant experimental method, though it requires sophisticated enrichment strategies to overcome sensitivity challenges posed by low ubiquitination stoichiometry [26] [25].

Key enrichment methodologies include:

- Ubiquitin tagging-based approaches: Expression of epitope-tagged ubiquitin (e.g., His, Strep, FLAG) enables affinity purification of ubiquitinated proteins, though this may introduce artifacts from tag interference [25].

- Antibody-based enrichment: Linkage-specific antibodies (e.g., recognizing K48 or K63 chains) allow isolation of particular ubiquitin chain types, but suffer from high cost and potential non-specific binding [25].

- Ubiquitin-binding domain (UBD) tools: Tandem-repeated ubiquitin-binding entities (TUBEs) with enhanced affinity permit enrichment of endogenous ubiquitination without genetic manipulation [25].

Recent quantitative studies reveal that ubiquitination site occupancy spans over four orders of magnitude, with median occupancy approximately three orders of magnitude lower than phosphorylation, explaining why enrichment remains essential for detection [26]. These experimental methods generate the ground-truth data essential for training and validating computational predictors while establishing the performance benchmarks that computational approaches must exceed.

Computational Prediction Approaches: Methodological Evolution

The progression of computational tools for ubiquitination site prediction mirrors broader trends in bioinformatics, transitioning from feature-engineered machine learning to representation learning with deep neural networks.

Traditional Machine Learning Foundations

Early prediction systems relied on manually curated features and conventional classifiers:

- UbiPred: Utilized support vector machines (SVM) with 31 selected physicochemical properties of amino acids [24].

- CKSAAP_UbSite: Implemented SVM with k-spaced amino acid pairs composition [24].

- hCKSAAP_UbSite: Expanded feature space to include protein aggregation tendencies alongside sequence features [24].

These models demonstrated the feasibility of computational prediction but exhibited limited generalizability across species and conditions.

Deep Learning Revolution

Contemporary tools leverage diverse deep learning architectures:

- Ubigo-X: Employs an ensemble approach with three sub-models: Single-Type sequence-based features, k-mer sequence-based features, and structure-based/function-based features. The framework transforms sequence features into image-like representations processed through ResNet34 and combines predictions via weighted voting [24] [27].

- EUP: Leverages the ESM2 protein language model to extract lysine site-dependent features, applies conditional variational autoencoders for dimensionality reduction, and builds downstream predictors on the latent representations for cross-species prediction [28].

- DeepMVP: Incorporates convolutional neural networks (CNNs) and bidirectional gated recurrent units (GRUs) optimized via genetic algorithm, trained on PTMAtlas—a curated compendium of 397,524 PTM sites from systematic reanalysis of 241 public mass spectrometry datasets [29].

- MMUbiPred: Unifies multiple sequence representations including embedding, one-hot, and physicochemical encodings within a single deep learning framework [30].

The table below summarizes the key methodological characteristics of these tools:

Table 1: Methodological Comparison of Ubiquitination Site Prediction Tools

| Tool | Core Algorithm | Feature Engineering | Architecture | Species Focus |

|---|---|---|---|---|

| Ubigo-X | Ensemble Learning | AAC, AAindex, one-hot, k-mer, structural features | ResNet34 + XGBoost + Weighted Voting | Species-neutral |

| EUP | Conditional Variational Autoencoder | ESM2 protein language model embeddings | cVAE + Residual DNN | Multi-species (Animals, Plants, Microbes) |

| DeepMVP | CNN + Bidirectional GRU | Sequence-based features from PTMAtlas | Ensemble CNN-BiGRU | Human and viral proteomes |

| MMUbiPred | Deep Learning | Embedding, one-hot, physicochemical encodings | Unified Deep Network | General, Human-specific, Plant-specific |

Experimental Protocols and Training Methodologies

Understanding the experimental design behind tool development is crucial for appropriate application:

Ubigo-X Training Protocol:

- Data Source: 53,338 ubiquitination and 71,399 non-ubiquitination sites from PLMD 3.0 after CD-HIT filtering (<30% sequence identity) [24]

- Feature Extraction: Sequence-based (AAC, AAindex, one-hot, k-mer), structure-based (secondary structure, solvent accessibility), function-based (signal peptide cleavage sites) [24]

- Model Training: Three separate sub-models with image-transformed features for deep learning components [24]

- Validation: Independent testing on PhosphoSitePlus data (65,421 ubiquitination and 61,222 non-ubiquitination sites) [24]

EUP Development Workflow:

- Data Acquisition: 182,120 ubiquitination sites from CPLM 4.0 across multiple species [28]

- Feature Generation: ESM2 embeddings for lysine sites followed by conditional variational inference for latent space representation [28]

- Data Balancing: Random under-sampling combined with Neighborhood Cleaning Rule for denoising [28]

- Cross-species Validation: Separate evaluation on animals, plants, and microbes [28]

DeepMVP Data Curation:

- MS Data Reanalysis: Systematic reprocessing of 241 public PTM-enriched MS/MS datasets with strict FDR control (<1% at both PSM and site levels) [29]

- PTMAtlas Construction: 397,524 high-confidence PTM sites across six modification types, including 106,777 ubiquitination sites [29]

- Model Optimization: Genetic algorithm for architecture search with ensemble strategy for robustness [29]

Performance Comparison and Benchmarking

Rigorous evaluation across standardized metrics reveals the relative strengths of each approach:

Table 2: Performance Comparison of Ubiquitination Site Prediction Tools

| Tool | AUC | Accuracy | MCC | Testing Dataset | Key Advantage |

|---|---|---|---|---|---|

| Ubigo-X | 0.85 (balanced) 0.94 (imbalanced) | 0.79 (balanced) 0.85 (imbalanced) | 0.58 (balanced) 0.55 (imbalanced) | PhosphoSitePlus (65,421 ubiquitination sites) | Robust to class imbalance |

| EUP | >0.87 (cross-species) | N/R | N/R | Independent test set (1,191 sites) | Cross-species generalization |

| DeepMVP | Substantial improvement over existing tools | N/R | N/R | Literature-curated variants and cancer proteogenomic datasets | Multi-PTM prediction |

| MMUbiPred | 0.87 | N/R | N/R | Independent tests | Unified model for specific taxa |

N/R: Not explicitly reported in the available literature

Performance analysis indicates that ensemble strategies like Ubigo-X demonstrate particular robustness when handling naturally imbalanced data (1:8 positive-to-negative ratio), achieving AUC of 0.94 under such conditions [24]. EUP excels in cross-species prediction, identifying conserved and species-specific ubiquitination patterns across animals, plants, and microbes [28]. DeepMVP establishes new performance standards across six PTM types, benefiting from its high-quality training data from systematic MS reanalysis [29].

Research Reagent Solutions: Experimental and Computational Tools

Table 3: Essential Research Reagents and Resources for Ubiquitination Studies

| Resource | Type | Function | Example Applications |

|---|---|---|---|

| Linkage-specific Antibodies | Experimental Reagent | Enrichment of specific ubiquitin chain types (K48, K63, M1, etc.) | Immunoprecipitation, Western blotting [25] |

| TUBEs (Tandem-repeated Ub-binding Entities) | Experimental Reagent | High-affinity capture of endogenous ubiquitinated proteins | Proteomic analysis without genetic manipulation [25] |

| Epitope-tagged Ubiquitin | Experimental Reagent | Affinity purification of ubiquitinated substrates | His-, Strep-, or FLAG-tagged ubiquitin systems [25] |

| PTMAtlas | Computational Resource | Curated compendium of 397,524 PTM sites from MS reanalysis | Training high-performance predictors [29] |

| CPLM 4.0 / PLMD 3.0 | Data Repository | Experimentally verified ubiquitination sites | Benchmarking computational predictions [24] [28] |

| ESM2 Protein Language Model | Computational Resource | Pre-trained deep learning model for protein sequence representation | Feature extraction for ubiquitination site prediction [28] |

Integration Pathways: Experimental and Computational Workflows

The most powerful applications combine computational prediction with experimental validation through structured workflows:

Diagram 1: Integrated Ubiquitination Site Discovery Workflow

The evolution of ubiquitination site prediction has progressed from rudimentary feature-based classifiers to sophisticated deep learning systems that leverage protein language models and ensemble strategies. Contemporary tools like Ubigo-X, EUP, and DeepMVP demonstrate markedly improved performance across balanced and imbalanced datasets while offering cross-species prediction capabilities.

For researchers selecting appropriate tools, consideration should include:

- Species focus: EUP excels for cross-species applications, while DeepMVP provides exceptional performance on human proteomes

- Data characteristics: Ubigo-X shows particular robustness with imbalanced data distributions

- Interpretability needs: EUP identifies conserved features across evolutionary lineages

- Multi-PTM requirements: DeepMVP simultaneously predicts six modification types

Future development will likely focus on integrating structural predictions, enhancing interpretability, and improving performance on rare ubiquitin chain types. The continued synergy between experimental method development and computational innovation will further accelerate the mapping of the ubiquitin landscape and its therapeutic applications.

Algorithm Architectures and Implementation Strategies

Ubiquitination, the process by which a ubiquitin protein attaches to a lysine residue on a substrate protein, is a fundamental post-translational modification (PTM) with critical roles in cellular regulation, protein degradation, and disease pathogenesis [24] [31]. Experimental identification of ubiquitination sites is resource-intensive, driving the development of computational prediction tools [32] [10]. Among these, traditional machine learning (ML) models remain pivotal for their interpretability, efficiency, and robust performance. This guide objectively compares the performance of three dominant traditional ML algorithms—Random Forest (RF), Support Vector Machine (SVM), and eXtreme Gradient Boosting (XGBoost)—in predicting ubiquitination sites, providing researchers with actionable insights for their computational workflows.

Core Methodologies in Ubiquitination Site Prediction

The predictive accuracy of any ML model hinges on a structured experimental pipeline. The following workflow outlines the standard protocols used in benchmark studies for ubiquitination site prediction.

Data Sourcing and Preprocessing

Benchmark datasets are typically curated from public repositories such as PLMD, dbPTM, and CPLM [24] [28] [10]. A standard preprocessing protocol involves using CD-HIT to remove sequences with >30-40% similarity, reducing homology bias [24] [33]. Positive samples are short sequence fragments (e.g., windows of 27 or 41 amino acids) centered on experimentally verified ubiquitinated lysine residues. Negative samples comprise similar fragments centered on non-ubiquitinated lysines from the same protein sequences, often filtered to avoid high similarity with positive samples [24] [33].

Feature Extraction and Selection

Effective feature engineering is critical. Common feature extraction methods include:

- Sequence-based features: Binary Encoding (BE), Composition of k-spaced Amino Acid Pairs (CKSAAP), and Position Weight Matrix (PWM) [32] [33].

- Evolutionary information: Position-Specific Scoring Matrix (PSSM) [33].

- Physicochemical properties: Hundreds of amino acid indices (AAindex) or a curated set of 31 properties [32] [31]. To mitigate dimensionality and overfitting, feature selection methods like LASSO (Least Absolute Shrinkage and Selection Operator), mRMR (Minimum Redundancy - Maximum Relevance), and Null Importances are employed to identify the most informative feature subset [32] [33].

Model Training and Evaluation Metrics

Models are typically trained using k-fold cross-validation (e.g., 5-fold or 10-fold) to ensure robustness [34]. Performance is evaluated on independent test sets not used during training. Key metrics include:

- AUC (Area Under the ROC Curve): Measures overall ranking performance.

- Accuracy (ACC): Proportion of correct predictions.

- MCC (Matthews Correlation Coefficient): A balanced measure for imbalanced datasets.

- F1-Score: Harmonic mean of precision and recall.

Performance Comparison of RF, SVM, and XGBoost

The following table synthesizes quantitative performance data for RF, SVM, and XGBoost from recent benchmark studies.

Table 1: Comparative Performance of Traditional ML Classifiers for Ubiquitination Site Prediction

| Classifier | Species / Dataset | AUC | Accuracy | MCC | F1-Score | Key Features Used | Source |

|---|---|---|---|---|---|---|---|

| Random Forest (RF) | Homo sapiens | 0.950 | - | 0.781 | - | BE, CKSAAP, EAAC, PWM, AA531, PSSM | [33] |

| Random Forest (RF) | Arabidopsis thaliana | 0.977 | - | 0.827 | - | BE, CKSAAP, EAAC, PWM, AA531, PSSM | [33] |

| XGBoost | Homo sapiens | - | 0.8198 | - | 0.902 | Hybrid (Sequence + Hand-crafted) | [10] |

| Support Vector Machine (SVM) | Multiple Datasets (Set1, Set2, Set3) | 0.9998, 0.8887, 0.8481 | 98.33%, 81.12%, 76.90% | - | - | BE, PseAAC, CKSAAP, PSPM (with LASSO) | [32] |

| SVM | Arabidopsis thaliana | 0.868 | 81.56% | - | - | AAC, CKSAAP | [10] |

Analysis of Comparative Performance

Random Forest (RF) demonstrates top-tier performance, particularly in conjunction with comprehensive feature fusion and selection. The UbNiRF model, which combines RF with the Null Importances feature selection method, achieved exceptionally high MCC scores (0.827 for A. thaliana, 0.781 for H. sapiens), indicating superior balance between sensitivity and specificity on imbalanced data [33]. RF's ensemble nature, which aggregates many decision trees, makes it robust against overfitting and effective at capturing complex feature interactions.

Support Vector Machine (SVM) is a well-established performer in ubiquitination prediction. The UbiSitePred model, which used LASSO for feature selection before SVM classification, reported near-perfect AUC (0.9998) and accuracy (98.33%) on one dataset, showcasing its potential with optimized feature sets [32]. SVM excels in high-dimensional spaces and is particularly effective when a clear margin of separation exists in the data. Its performance can be highly dependent on the kernel choice and feature preprocessing.

eXtreme Gradient Boosting (XGBoost) represents the gradient boosting approach, which builds trees sequentially to correct errors from previous ones. In a broad comparison of ten ML methods for human ubiquitination sites, a hybrid deep learning model utilizing XGBoost-related frameworks achieved an F1-score of 0.902 and an accuracy of 81.98%, highlighting the strength of gradient-boosting-derived architectures [10]. XGBoost is known for its speed, scalability, and high performance, especially on structured data.

Table 2: Key Resources for Ubiquitination Site Prediction Research

| Resource Name | Type | Primary Function in Research | Example/Reference |

|---|---|---|---|

| PLMD / CPLM / dbPTM | Data Repository | Source of experimentally verified ubiquitination sites for model training and testing. | [24] [28] [10] |

| CD-HIT & CD-HIT-2D | Bioinformatics Tool | Reduces sequence redundancy in datasets to prevent model overfitting. | [24] [33] |

| Amino Acid Indices (AAindex) | Feature Database | Provides numerical representations of physicochemical properties for feature extraction. | [24] [31] |

| Position-Specific Scoring Matrix (PSSM) | Evolutionary Feature | Encodes evolutionary conservation information from multiple sequence alignments. | [33] |

| LASSO / mRMR / Null Importances | Feature Selection Algorithm | Identifies optimal, non-redundant feature subsets to improve model performance and interpretability. | [32] [35] [33] |

| SMOTE | Data Sampling Technique | Addresses class imbalance by generating synthetic samples of the minority class (ubiquitinated sites). | [33] |

The evaluation of traditional machine learning approaches reveals a nuanced performance landscape for ubiquitination site prediction. Random Forest consistently achieves high MCC and AUC, establishing it as a robust and reliable choice, particularly when combined with advanced feature selection. Support Vector Machine remains a powerful and often top-performing model, especially with careful feature engineering, as demonstrated by UbiSitePred. XGBoost and related gradient boosting methods show excellent accuracy and F1-scores, making them strong contenders in the ML toolkit. The choice of algorithm is interdependent with feature engineering and data preprocessing strategies. For researchers, this comparative data supports RF and SVM as proven, high-performance solutions for building ubiquitination site predictors, with the selection often boiling down to the specific dataset characteristics and the desired balance between different performance metrics.

Ubiquitination is a crucial post-translational modification (PTM) that regulates diverse cellular functions, including protein degradation, signal transduction, DNA repair, and cell cycle progression [36] [31]. Accurate identification of ubiquitination sites is essential for understanding disease mechanisms and developing therapeutic strategies. While traditional experimental methods for ubiquitination site detection are expensive and time-consuming, deep learning architectures have emerged as powerful computational alternatives, offering unprecedented accuracy and efficiency [24] [10]. This review provides a comprehensive comparison of convolutional neural networks (CNNs), ResNet architectures, and hybrid models for ubiquitination site prediction, evaluating their performance, methodologies, and applicability to different research scenarios.

Core Architectural Components in Ubiquitination Prediction

Convolutional Neural Networks (CNNs) represent a foundational architecture that applies convolutional filters to extract local sequence patterns from protein data. These models excel at identifying position-invariant features in amino acid sequences through their hierarchical structure of convolutional and pooling layers [10]. For ubiquitination site prediction, CNNs typically process sequence embeddings such as one-hot encoding or physicochemical properties to identify motifs around lysine residues.

ResNet (Residual Networks) introduce skip connections that enable the training of substantially deeper networks by mitigating the vanishing gradient problem. In ubiquitination prediction, ResNet architectures allow for more complex feature hierarchies while maintaining training stability [37] [31]. The residual blocks typically incorporate multi-kernel convolutions to capture features at different scales simultaneously, significantly enhancing pattern recognition capabilities.

Hybrid Models combine architectural components from multiple deep learning approaches to leverage their complementary strengths. Common hybridizations include CNN-Bidirectional GRU for spatiotemporal feature extraction [38], CNN-Transformer for integrating local and global sequence contexts [31], and ensemble methods that fuse predictions from multiple specialized sub-models [24]. These architectures demonstrate superior performance by capturing both short-range motifs and long-range dependencies in protein sequences.

Quantitative Performance Comparison

Table 1: Performance comparison of deep learning architectures for ubiquitination site prediction

| Architecture | Model Name | Accuracy | Precision | Recall | AUC | MCC |

|---|---|---|---|---|---|---|

| CNN-Based | DeepUbi [10] | - | - | - | 0.99 | - |

| ResNet-Based | ResUbiNet [36] [31] | 0.819 | 0.879 | 0.915 | 0.902 | - |

| Hybrid | Ubigo-X (Balanced) [24] | 0.79 | - | - | 0.85 | 0.58 |

| Hybrid | Ubigo-X (Imbalanced) [24] | 0.85 | - | - | 0.94 | 0.55 |

| Hybrid | CNN-LSTM (Plants) [39] | 0.81 | - | - | - | - |

Table 2: Architectural components and their functional benefits in ubiquitination prediction

| Component | Function | Advantage |

|---|---|---|

| Multi-Head Attention [31] | Captures long-range dependencies in sequences | Identifies relationships between distant residues |

| Multi-Kernel Convolution [31] | Parallel convolutions with different receptive fields | Extracts motifs of varying lengths simultaneously |

| Squeeze-and-Excitation [31] | Recalibrates channel-wise feature responses | Enhances important features, suppresses less useful ones |

| Residual Connections [37] [31] | Creates skip connections between layers | Enables training of very deep networks |

| Weighted Voting Ensemble [24] | Combines predictions from multiple sub-models | Improves robustness and generalization |

Experimental Protocols and Methodologies

Benchmark Datasets and Preprocessing Standards

High-quality datasets form the foundation for training effective ubiquitination prediction models. The most widely adopted benchmark datasets include experimentally verified ubiquitination sites from UniProt, dbPTM, and PLMD 3.0 [31] [10]. Standard preprocessing involves extracting sequence fragments with the ubiquitinated lysine residue at the center, typically using window sizes of 25-31 amino acids [39] [31]. To ensure model generalization, researchers apply redundancy reduction techniques such as CD-HIT with 30% sequence identity threshold and use CD-HIT-2d to remove negative samples with high similarity to positive samples [24].

Data imbalance presents a significant challenge in ubiquitination prediction, as non-ubiquitinated sites vastly outnumber ubiquitinated sites. Advanced approaches address this through hybrid resampling techniques combining adaptive random undersampling with GAN-based oversampling [38]. Studies have demonstrated that proper handling of class imbalance significantly improves model performance, with Ubigo-X achieving 0.94 AUC on imbalanced test data compared to 0.85 AUC on balanced data [24].

Sequence Representation and Feature Encoding

Effective feature representation is critical for model performance. Modern architectures employ multiple encoding strategies:

Evolutionary features include BLOSUM62 matrices that capture substitution patterns and position-specific scoring matrices (PSSM) derived from multiple sequence alignments [31]. These features provide information about evolutionary constraints on specific sequence positions.

Physicochemical properties from databases like AAindex incorporate biochemical characteristics of amino acids, including hydrophobicity, charge, and structural properties [31]. ResUbiNet utilizes 31 carefully selected AAindex properties that have proven informative for ubiquitination prediction [31].

Embedding-based features represent a paradigm shift in sequence representation. Protein language models like ProtTrans generate context-aware embeddings by pre-training on millions of protein sequences [31]. These embeddings capture complex semantic relationships between amino acids and have demonstrated superior performance compared to traditional encoding schemes.

Innovative representations include the transformation of sequence features into image-like formats, enabling the application of advanced computer vision architectures. Ubigo-X converts AAC, AAindex, and one-hot encodings into 2D representations processed by ResNet34 [24].

Architectural Implementation Details

ResUbiNet exemplifies a modern integrated architecture, processing three parallel input streams: ProtTrans embeddings, AAindex properties, and BLOSUM62 matrices [31]. The model incorporates transformer blocks with multi-head attention to capture long-range dependencies, followed by residual blocks with multi-kernel convolutions to extract features at multiple scales. Squeeze-and-excitation blocks dynamically recalibrate feature importance, and residual connections enable stable training of deep networks [31].

Ubigo-X employs an ensemble strategy with three specialized sub-models: Single-Type sequence-based features (SBF), k-mer sequence-based features (Co-Type SBF), and structure-based and function-based features (S-FBF) [24]. The model combines predictions through weighted voting, with image-transformed sequence features processed by ResNet34 and structural features processed by XGBoost.

CNN-GRU Hybrids for IIoT security applications demonstrate architectural patterns applicable to ubiquitination prediction, featuring convolutional layers for local pattern extraction followed by gated recurrent units (GRUs) for capturing temporal dependencies in sequential data [38]. These architectures have proven particularly effective for handling sequential network traffic data with inherent temporal patterns.

Diagram 1: Architectural comparison of CNN, ResNet, and Hybrid models for ubiquitination site prediction

Research Reagent Solutions and Computational Tools

Table 3: Essential research reagents and computational tools for ubiquitination site prediction

| Tool/Resource | Type | Function | Access |

|---|---|---|---|

| PTMAtlas [29] | Database | Curated compendium of 397,524 PTM sites from systematic MS reprocessing | Publicly available |

| DeepMVP [29] | Software | Deep learning framework for predicting 6 major PTM types including ubiquitination | http://deepmvp.ptmax.org |

| ProtTrans [31] | Embedding | Protein language model generating context-aware sequence representations | Publicly available |

| Ubigo-X [24] | Web Tool | Species-neutral ubiquitination predictor with image-based feature representation | http://merlin.nchu.edu.tw/ubigox/ |

| CD-HIT [24] | Software | Sequence clustering to reduce redundancy in training datasets | Publicly available |

| ResUbiNet [36] [31] | Model | Integrated architecture with ProtTrans, transformer, and residual components | Code not specified |

Diagram 2: Experimental workflow for developing deep learning models in ubiquitination site prediction

The comparative analysis of deep learning architectures for ubiquitination site prediction reveals distinct advantages for different research scenarios. CNN-based models provide a solid foundation for initial investigations, offering interpretable feature learning with relatively low computational requirements. ResNet architectures excel in scenarios requiring deep feature hierarchies and demonstrate superior performance in capturing complex ubiquitination patterns. Hybrid models represent the state-of-the-art, achieving the highest performance metrics by leveraging complementary architectural components and ensemble strategies.

For researchers selecting appropriate architectures, we recommend CNN-based approaches for preliminary studies with limited data or computational resources. ResNet architectures are ideal for detailed investigations requiring high accuracy on complex datasets. Hybrid models should be employed for production-grade prediction tools where maximum performance is essential. Future directions include developing unified frameworks for multiple PTM predictions, incorporating protein structural information, and creating more interpretable models that provide biological insights beyond prediction accuracy.

The integration of these deep learning approaches with experimental validation will accelerate our understanding of ubiquitination mechanisms and facilitate the development of targeted therapies for ubiquitination-related diseases.

The effective identification of ubiquitination sites is a critical step in deciphering the molecular mechanisms of protein regulation and their roles in diseases such as cancer and neurological disorders. While experimental methods like mass spectrometry exist, they are often time-consuming, expensive, and labor-intensive [40] [41]. Computational prediction methods have emerged as indispensable alternatives, with feature engineering representing the fundamental component that determines their success. This guide provides a systematic comparison of feature engineering strategies—sequence-based, structural, and physicochemical properties—for ubiquitination site prediction, offering researchers a framework for selecting and implementing these approaches within their ubiquitination research workflows.

Comparative Analysis of Feature Engineering Strategies

The table below summarizes the core characteristics, advantages, and limitations of the three primary feature engineering strategies used in ubiquitination site prediction.

Table 1: Comparison of Feature Engineering Strategies for Ubiquitination Site Prediction

| Strategy | Key Features | Representative Tools | Performance Highlights | Advantages | Limitations |

|---|---|---|---|---|---|

| Physicochemical Properties (PCPs) | Hydrophobicity, polarity, charge, and other biochemical attributes of amino acids [40]. | UbiPred [40] [24], ESA-UbiSite [24] | UbiPred: 84.44% accuracy (LOOCV) using 31 informative PCPs [40]. | High interpretability; captures direct biochemical context; effective even with smaller datasets [40] [41]. | Requires feature selection to avoid redundancy from 500+ properties [40] [42]. |

| Sequence-Based Features | Amino acid composition (AAC), k-spaced amino acid pairs (CKSAAP), pseudo amino acid composition (PseAAC) [24]. | CKSAAP_UbSite [24], Ubigo-X [24] | Ubigo-X (ensemble): AUC 0.85 on balanced test data [24]. | Simple to compute; does not require structural data; effective for deep learning models [24]. | Lacks 3D structural context; may miss structural determinants of ubiquitination. |

| Structural & Evolutionary Information | Secondary structure, solvent accessibility, evolutionary conservation from PSSM [43] [44]. | SSUbi [43] [44], TransDSI [45] | SSUbi: Enhanced accuracy for species with small sample sizes [43] [44]. TransDSI: AUROC 0.83 for DUB-substrate interaction prediction [45]. | Captures crucial spatial and evolutionary constraints; improves model generalizability [43] [45]. | Structural data not always available; computationally intensive to generate [43] [44]. |

Detailed Methodologies and Experimental Protocols

Protocol 1: Informative Physicochemical Property Mining

The UbiPred protocol exemplifies a rigorous approach to selecting the most informative PCPs from a large pool of candidates [40].

- Data Preparation: Construct a benchmark dataset of protein sequences containing confirmed ubiquitylation sites (positive samples) and non-ubiquitylation sites (negative samples) from databases like UbiProt.

- Feature Extraction: For each lysine residue and its surrounding sequence window, calculate values for all 531 physicochemical properties from the AAindex database. The value for a property across the window is typically the average of that property's values for all amino acids in the window [41].

- Feature Selection: Implement an algorithm like the Informative Physicochemical Property Mining Algorithm (IPMA). This method uses a bi-objective genetic algorithm to select a subset of properties that maximizes the prediction accuracy of a classifier (e.g., SVM) via cross-validation [40].

- Model Training and Validation: Train a Support Vector Machine (SVM) classifier using the selected subset of PCPs. Validate the model using rigorous methods like Leave-One-Out Cross-Validation (LOOCV) and evaluate performance with metrics such as accuracy and Area Under the ROC Curve (AUC) [40]. The study achieved 72.19% accuracy using all 531 properties, which was improved to 84.44% after selecting an informative subset of 31 properties [40].

Protocol 2: Integrating Sequence and Structural Information

The SSUbi model demonstrates a modern deep-learning approach that integrates multiple data types for species-specific prediction [43] [44].

- Data Curation and Species-Specific Partitioning: Collect ubiquitination site data from resources like the Protein Lysine Modification Database (PLMD). Partition the data into species-specific sets (e.g., Homo sapiens, Mus musculus) to train dedicated models [44].

- Multi-Dimensional Feature Extraction:

- Sequence Feature Extraction: Encode sequences using methods like one-hot encoding or k-mer composition [24].

- Structural Feature Prediction: Use tools like NetSurfP-3.0 to predict secondary structure features (helix, strand, coil) and solvent accessibility (RSA/ASA) for each amino acid in the sequence [44].

- Feature Integration and Model Training: Develop a deep learning architecture with separate sub-modules for sequence and structural features. SSUbi uses convolutional operations and a channel attention mechanism to extract multi-dimensional features from both data types. These features are then integrated and fed into a capsule network for final classification [43] [44].

- Performance Evaluation: Evaluate the model on held-out test sets for each species, reporting metrics like AUC, accuracy, and MCC. Compare its performance against general prediction models to demonstrate the advantage of the species-specific, structure-integrated approach [44].

Protocol 3: Deep Transfer Learning for Interaction Prediction

The TransDSI framework addresses the challenge of predicting Deubiquitinase-Substrate Interactions (DSIs) with limited training data [45].

- Network Construction: Represent the human proteome as a Sequence Similarity Network (SSN), where proteins are nodes and edges represent significant sequence similarity based on BLAST scores [45].